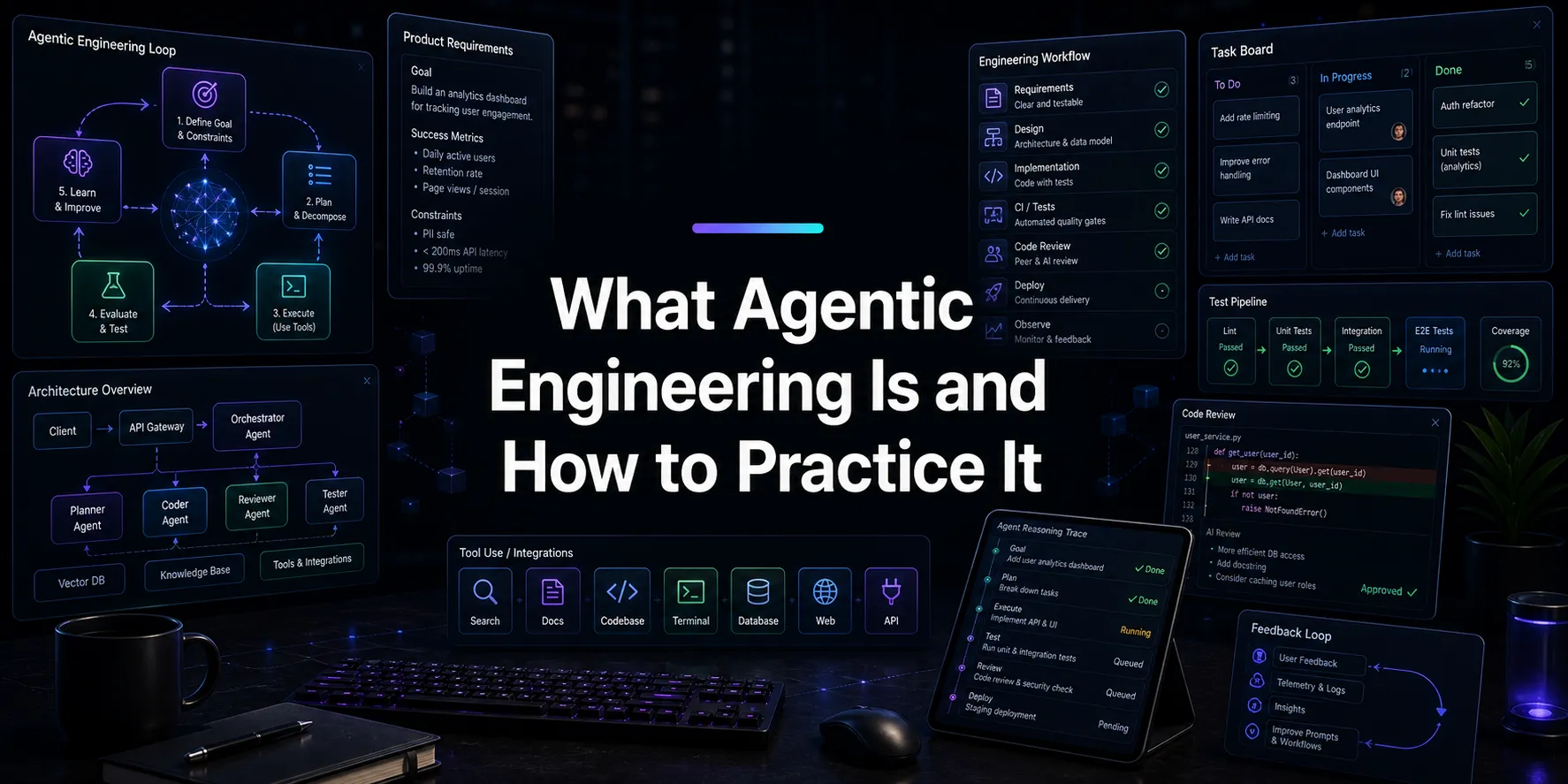

What Agentic Engineering Is and How to Practice It

Agentic engineering is a software workflow built around delegation, structured context, parallel execution, and rigorous review. Here is how to do it well.

Agentic engineering is a way of organizing software work around capable AI agents without giving up engineering discipline.

Instead of treating the model as a faster autocomplete engine or a one-off code generator, you treat it as an execution layer that can carry out substantial units of work when it is given the right context, the right boundaries, and the right review process. That changes how features get specified, how work gets decomposed, how parallelism is introduced, and how output is verified before it lands.

This article lays out what agentic engineering is, what the process looks like in practice, and why a workspace like Nimbalyst is useful for doing it well.

What Agentic Engineering Actually Means

Agentic engineering is the practice of delegating meaningful engineering work to AI agents within a structured workflow that preserves intent, context, reviewability, and control.

That definition has four important implications.

First, the unit of work is larger. You are not asking for isolated code snippets. You are asking an agent to implement a feature slice, investigate a bug, refactor a boundary, update documentation, or prepare a reviewable change set.

Second, context has to be explicit. The agent needs the spec, the constraints, the relevant files, the desired architecture, the acceptance criteria, and often supporting artifacts such as a mockup or diagram. Good results come from well-structured context, not from charisma in the prompt.

Third, execution has to be decomposable. If everything lives in one giant session with one overburdened agent, the workflow does not scale. Agentic engineering becomes interesting when work can be broken into coherent streams that run in parallel and later converge.

Fourth, review has to be first-class. An agent's output is not production-ready merely because it exists. It becomes usable once it has been examined against the original intent and against the standards of the codebase.

Agentic engineering is software process design for a world in which capable agents can take on real implementation work.

The Process

The process usually has five stages: specification, artifact creation, decomposition, execution, and review. Different teams collapse or expand these stages, but the underlying structure stays the same.

1. Specification

The process starts with a spec.

The spec should define the problem, constraints, acceptance criteria, and relevant context. If the change touches authentication, analytics, a rendering pipeline, or a deployment boundary, those details need to be in the brief. If there are conventions the team expects the agent to follow, those need to be explicit as well.

This is why markdown remains so useful in agentic workflows. It is readable, diffable, easy to revise, easy to version, and easy to keep adjacent to the code itself. We have argued elsewhere that one plan document is often more useful than documents scattered across several tools. The reason is simple: the plan is not background material. It is an executable source of context for the human and the agent.

At this stage the goal is not verbosity. It is clarity. The agent should be able to answer three questions from the document alone: what are we building, what constraints matter, and how will success be judged.
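One way to make the three-question test mechanical is to check the spec before any agent sees it. The sketch below assumes a hypothetical convention of three markdown headings ("Goal", "Constraints", "Acceptance criteria"); the article names the questions, not a required layout, so adapt the titles to your own template.

```python
import re

# Hypothetical heading titles mapped to the question each one answers.
REQUIRED_SECTIONS = {
    "goal": "what are we building",
    "constraints": "what constraints matter",
    "acceptance criteria": "how will success be judged",
}


def missing_spec_sections(spec_markdown: str) -> list[str]:
    """Return the questions a markdown spec does not yet answer.

    A section counts as present when a heading with that title exists
    and at least one non-blank line follows it before the next heading.
    """
    sections: dict[str, list[str]] = {}
    current = None
    for line in spec_markdown.splitlines():
        heading = re.match(r"^#{1,6}\s+(.*?)\s*$", line)
        if heading:
            current = heading.group(1).strip().lower()
            sections.setdefault(current, [])
        elif current is not None and line.strip():
            sections[current].append(line)
    return [
        question
        for title, question in REQUIRED_SECTIONS.items()
        if not sections.get(title)
    ]


spec = """# Goal
Add CSV export to the reports page.

## Constraints
Reuse the existing auth middleware.

## Acceptance criteria
"""
print(missing_spec_sections(spec))  # ['how will success be judged']
```

An empty result does not mean the spec is good, only that it is not obviously incomplete.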

2. Artifact Creation

Many changes need more than text.

If a feature has a user interface, a mockup materially improves the quality of delegation. If it changes a system boundary, a diagram reduces ambiguity. If it affects data flow, a schema or data model is often more useful than another paragraph of prose. If it has rollout or sequencing concerns, a task breakdown becomes part of the spec rather than an afterthought.
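The rules of thumb above can be written down as a small lookup so that the required artifacts are decided before delegation rather than after. This is a minimal sketch; `ChangeProfile` and its flags are hypothetical names for the four kinds of change the paragraph describes.

```python
from dataclasses import dataclass


@dataclass
class ChangeProfile:
    """Hypothetical flags describing what a proposed change touches."""
    has_ui: bool = False
    changes_system_boundary: bool = False
    affects_data_flow: bool = False
    has_rollout_concerns: bool = False


def required_artifacts(change: ChangeProfile) -> list[str]:
    """Map each kind of change to the artifact that should accompany the spec."""
    artifacts = []
    if change.has_ui:
        artifacts.append("mockup")
    if change.changes_system_boundary:
        artifacts.append("diagram")
    if change.affects_data_flow:
        artifacts.append("data model")
    if change.has_rollout_concerns:
        artifacts.append("task breakdown")
    return artifacts


print(required_artifacts(ChangeProfile(has_ui=True, affects_data_flow=True)))
# ['mockup', 'data model']
```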

This is where many agent setups still feel immature. The agent may be able to read code well enough, but the surrounding artifacts live elsewhere and are hard to keep aligned. The more your design intent is scattered across Figma, diagrams, notes, tickets, and chat threads, the more likely the agent is to act on partial context.

In agentic engineering, these artifacts are part of the control surface. They make the work legible before implementation begins and make it easier to review whether the implementation matches the intended behavior later on.

3. Decomposition

Once the target is clear, the work gets decomposed.

A meaningful feature often contains several distinct workstreams: an API change, a frontend implementation, a test update, a migration, a documentation pass, perhaps an analytics or instrumentation change. These workstreams do not all need to be done by the same agent in the same session.

The decomposition needs to be practical. The tasks should have clear ownership, limited overlap, and a shared reference back to the source spec. Each agent should know what slice it owns and what assumptions it should not violate. When that is done well, parallelism becomes an advantage rather than a source of chaos.
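"Clear ownership, limited overlap, and a shared reference back to the source spec" can be checked before any session starts. The sketch below assumes a hypothetical `Workstream` record in which each stream declares the files or directories it may modify; two streams conflict when one owned path contains the other.

```python
from dataclasses import dataclass
from itertools import combinations
from pathlib import PurePosixPath


@dataclass(frozen=True)
class Workstream:
    """Hypothetical record for one slice of a decomposed feature."""
    name: str
    spec: str                     # path to the shared source spec
    owned_paths: tuple[str, ...]  # files or directories this stream may modify


def _overlaps(p: str, q: str) -> bool:
    # True when one path is the same as, or nested inside, the other.
    a, b = PurePosixPath(p), PurePosixPath(q)
    return a.is_relative_to(b) or b.is_relative_to(a)


def decomposition_problems(streams: list[Workstream]) -> list[str]:
    """Flag streams that point at different specs or own overlapping paths."""
    problems = []
    specs = sorted({s.spec for s in streams})
    if len(specs) > 1:
        problems.append(f"streams reference different specs: {specs}")
    for a, b in combinations(streams, 2):
        for p in a.owned_paths:
            for q in b.owned_paths:
                if _overlaps(p, q):
                    problems.append(f"{a.name} and {b.name} overlap on {p} / {q}")
    return problems


streams = [
    Workstream("api", "plans/csv-export.md", ("server/routes/reports",)),
    Workstream("frontend", "plans/csv-export.md", ("web/src/reports",)),
    Workstream("tests", "plans/csv-export.md", ("server/routes/reports/tests",)),
]
print(decomposition_problems(streams))
# ['api and tests overlap on server/routes/reports / server/routes/reports/tests']
```

Here the fix is to narrow the API stream's ownership or move the tests out of its directory, so each slice has exactly one owner.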

This is also where session management becomes part of engineering, not just convenience. If you are running several agents against the same repository, you need to know which session is handling which workstream, which branch or worktree it belongs to, what state it is in, and whether it is blocked or ready for review. Otherwise the system collapses into hidden concurrency.
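Hidden concurrency usually shows up as two sessions quietly sharing a worktree or a workstream. A minimal registry check might look like the following; the `Session` fields and state strings are hypothetical and do not reflect any particular tool's data model.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Session:
    """Hypothetical registry entry for one running agent session."""
    session_id: str
    workstream: str
    branch: str
    worktree: str
    state: str  # e.g. "running", "blocked", "ready_for_review"


def hidden_concurrency(sessions: list[Session]) -> list[str]:
    """Report worktrees or workstreams claimed by more than one session."""
    warnings = []
    for attr in ("worktree", "workstream"):
        claimed = defaultdict(list)
        for s in sessions:
            claimed[getattr(s, attr)].append(s.session_id)
        for key, ids in claimed.items():
            if len(ids) > 1:
                warnings.append(f"{attr} {key!r} is shared by {', '.join(ids)}")
    return warnings


sessions = [
    Session("s1", "api", "feat/csv-api", "../wt-api", "running"),
    Session("s2", "frontend", "feat/csv-ui", "../wt-ui", "blocked"),
    Session("s3", "api", "feat/csv-api-2", "../wt-api", "ready_for_review"),
]
for warning in hidden_concurrency(sessions):
    print(warning)
```

Running this reports that `s1` and `s3` share both the `../wt-api` worktree and the `api` workstream, which is exactly the situation a session view should surface before the two agents overwrite each other.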

4. Execution

Execution works because the earlier stages did their job.

At this point the agent should be able to read the relevant code, understand the surrounding artifacts, make the necessary changes, run tests, and report what it did. If the task was scoped correctly, the output is bounded enough to review without being trivial.

This is also where isolation matters. In Parallel Claude Code Agents: What Breaks After Worktrees, we wrote about running parallel AI coding agents and about what breaks when worktrees exist without a management layer around them. The lesson is that the worktree is necessary but not sufficient. Isolation prevents collisions. It does not, by itself, create coordination.
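The isolation half is the easy part. Git's `git worktree add -b <new-branch> <path> <commit-ish>` gives each stream its own branch and checkout. The sketch below only builds those command lines. The `agent/` branch prefix and `../worktrees` directory are hypothetical conventions, and nothing in it handles the coordination problem.

```python
import shlex


def worktree_commands(workstreams: list[str], base: str = "main",
                      root: str = "../worktrees") -> list[list[str]]:
    """Build one `git worktree add` invocation per workstream.

    Each stream gets its own branch and directory so agents never share
    a checkout. Branch and directory naming here is illustrative.
    """
    return [
        ["git", "worktree", "add", "-b", f"agent/{name}", f"{root}/{name}", base]
        for name in workstreams
    ]


for argv in worktree_commands(["api", "frontend", "tests"]):
    print(shlex.join(argv))
# git worktree add -b agent/api ../worktrees/api main
# git worktree add -b agent/frontend ../worktrees/frontend main
# git worktree add -b agent/tests ../worktrees/tests main
```

To create the worktrees, pass each list to `subprocess.run(argv, check=True)` from the repository root. Tracking which session owns which worktree, and what state it is in, still has to happen somewhere else.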

Good execution in an agentic workflow therefore includes status visibility. You want to know which tasks are in progress, which are waiting on human input, which have produced reviewable diffs, and which have failed. That sounds procedural because it is procedural. Engineering work does not become less procedural because an AI is doing part of it.
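The four states in that paragraph are enough for a basic status board. This is a sketch with a hypothetical `TaskStatus` enum; a real setup would populate it from whatever tracks the sessions.

```python
from enum import Enum


class TaskStatus(Enum):
    """The four execution states described above; the enum is illustrative."""
    IN_PROGRESS = "in progress"
    WAITING_ON_HUMAN = "waiting on human input"
    REVIEWABLE = "reviewable diff"
    FAILED = "failed"


def status_board(tasks: dict[str, TaskStatus]) -> dict[str, list[str]]:
    """Group task names by status, keeping every status visible even when empty."""
    board = {status.value: [] for status in TaskStatus}
    for task, status in sorted(tasks.items()):
        board[status.value].append(task)
    return board


tasks = {
    "api": TaskStatus.REVIEWABLE,
    "frontend": TaskStatus.WAITING_ON_HUMAN,
    "migration": TaskStatus.FAILED,
    "docs": TaskStatus.IN_PROGRESS,
}
for status, names in status_board(tasks).items():
    print(f"{status:>24}: {', '.join(names) or '-'}")
```

Empty columns are kept on purpose. "Nothing is waiting on you" is useful information, too.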

5. Review

Review is the decisive stage.

The standard should be simple: the agent’s output needs to be understandable, inspectable, and reversible. If the team cannot tell what changed and why, the workflow is not good enough. If the output looks plausible but cannot be checked against the intent, the workflow is not good enough. If review happens by reading walls of terminal text and hoping nothing important was missed, the workflow is not good enough.
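The three properties can serve as a gate before a human spends time on the output. In the sketch below, `ChangeSet` and its fields are hypothetical. "Understandable" is approximated as having a summary and a rationale, "inspectable" as having a diff, and "reversible" as living on its own branch rather than on a protected one.

```python
from dataclasses import dataclass


@dataclass
class ChangeSet:
    """Hypothetical summary of one agent's output awaiting review."""
    summary: str    # what changed
    rationale: str  # why it changed, tied back to the spec
    diff: str       # the change itself, in a form a reviewer can inspect
    branch: str     # where the change lives
    protected_branches: tuple[str, ...] = ("main",)


def review_blockers(cs: ChangeSet) -> list[str]:
    """Return the reasons this change set is not yet ready for review."""
    blockers = []
    if not (cs.summary.strip() and cs.rationale.strip()):
        blockers.append("not understandable: missing summary or rationale")
    if not cs.diff.strip():
        blockers.append("not inspectable: no diff to review")
    if cs.branch in cs.protected_branches:
        blockers.append("not reversible: change was made directly on " + cs.branch)
    return blockers


change = ChangeSet(
    summary="Add CSV export endpoint",
    rationale="",
    diff="+def export_csv(report_id): ...",
    branch="agent/api",
)
print(review_blockers(change))  # ['not understandable: missing summary or rationale']
```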

This is why visual diff review matters. Code can be reviewed as code, but not everything the agent touches is code. Plans, markdown, diagrams, mockups, and data models also need to be reviewed in forms that make sense for the artifact. The more the agent participates across file types, the less adequate a purely textual review surface becomes.

The purpose of review is to validate that the work fits the architecture, satisfies the acceptance criteria, and does not introduce avoidable complexity or risk. In practice, that means checking the design intent, the code changes, the tests, and the user-facing behavior as one connected unit.
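Treating design intent, code, tests, and behavior as one unit can start with something as simple as requiring evidence for every acceptance criterion. In this sketch the criteria and evidence labels are hypothetical. Evidence can be a test name, a note that the UI matches the mockup, or anything else a reviewer actually checked.

```python
def unverified_criteria(criteria: list[str],
                        evidence: dict[str, list[str]]) -> list[str]:
    """Return acceptance criteria with no recorded evidence.

    `evidence` maps a criterion to whatever a reviewer checked for it:
    test names, a comparison against the mockup, a manual walkthrough.
    """
    return [c for c in criteria if not evidence.get(c)]


criteria = [
    "Export includes every visible column",
    "Users without report access get a 403",
    "Matches the export button in the mockup",
]
evidence = {
    "Export includes every visible column": ["test_export_columns"],
    "Users without report access get a 403": [],
}
print(unverified_criteria(criteria, evidence))
# ['Users without report access get a 403', 'Matches the export button in the mockup']
```

An empty list is a precondition for merging, not a substitute for reading the diff.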

Where Nimbalyst Fits

Nimbalyst puts the human and the agent in the same working set of artifacts: the spec, the plan, the mockup, the diagram, the code, the sessions, and the review surface. When those are scattered across separate tools, you introduce friction at every handoff. We wrote about that more broadly in From 7 Tabs to One Workspace, but the point is especially acute once multiple agents are involved.

In practical terms, Nimbalyst supports agentic engineering by giving you:

- a markdown workspace for specs and plan documents

- visual editors for mockups, diagrams, and structured artifacts

- session management for multiple active agents

- worktree-oriented execution across parallel streams

- visual review across the files those agents change

- one place to keep the human process and the agent process aligned

That matters because the process described above is only robust when the handoffs are tight. The spec has to remain near the implementation. The design artifact has to remain near the review. The session has to remain near the task it is executing. The output has to remain near the acceptance criteria it is being judged against.

Without that, the agent can still produce code, but the workflow stays brittle.

A Practical Way to Start

A reasonable way to adopt agentic engineering inside a team is to start with one feature and make the process explicit.

Write the spec in markdown. Add a mockup or diagram if the feature needs one. Break the work into a few discrete streams. Run those streams in separate sessions. Review each output against the source artifacts. Only then merge.

That is enough to expose where your current workflow is weak. Teams typically discover at least one of three problems: their specs are too thin, their decomposition is poor, or their review process is not built for the volume of change that agents generate. All three are fixable. In fact, discovering them is part of the point.

If you want the shorter, tactical version of this operating model, our best practices for coding with AI agents cover the day-to-day discipline. The larger argument here is that those practices are not isolated tips. Together they form a coherent engineering process.

Summary

Agentic engineering is worth taking seriously because it changes the economics of software work. It increases the amount of implementation that can be delegated, but it also raises the importance of architecture, scoping, specification, and review. Teams that handle those well will get disproportionate leverage from agents. Teams that do not will mostly generate more output and more confusion.

The process is not mysterious. Define the work clearly. Create the right artifacts. Decompose the problem. Run the agents in isolated streams. Review the output carefully. Keep the whole loop in a workspace that makes those handoffs efficient.

Nimbalyst is one way to support this process well.
