The Bugs AI Writes: 5 Patterns to Watch For

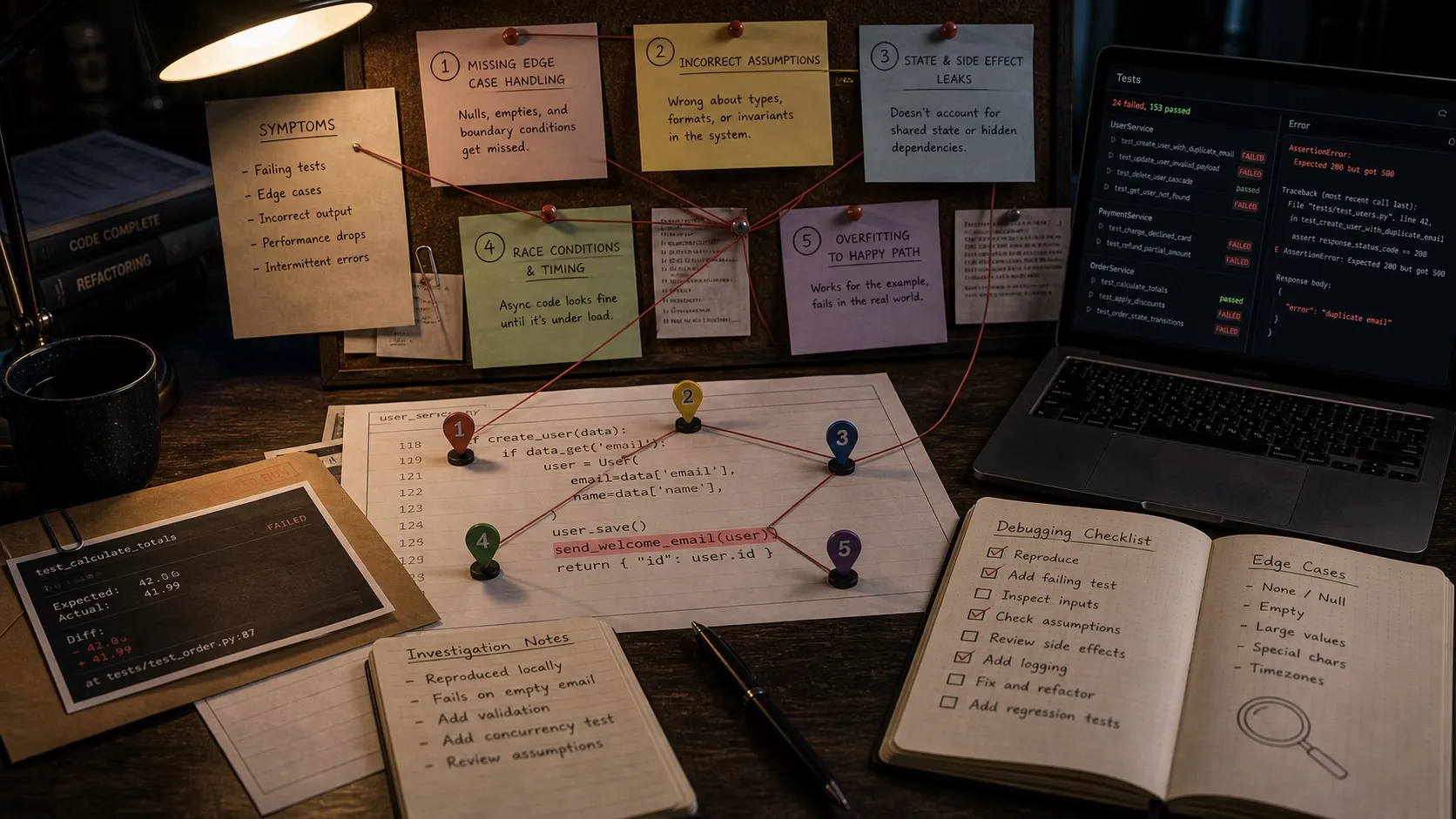

The bug patterns AI agents produce are consistent enough to categorize. Here are five specific patterns, how to catch each one, and why reviewing AI code is a different skill.

Reviewing AI-generated code has quietly become one of the most time-consuming parts of modern software development. As AI coding tools move from autocomplete to autonomous agents, developers are spending more of their day reading diffs they didn’t write.

VentureBeat recently reported that 43% of AI-generated code changes need debugging in production. ByteIota found AI-generated code produces 1.7x more issues per pull request than human-written code. And 60% of AI code faults are “silent failures”: code that compiles, passes tests, and looks correct but produces wrong results.

The stats alone aren’t useful unless you know what to look for. Across thousands of AI-generated diffs, the same five patterns keep showing up.

Pattern 1: Plausible but wrong logic

This is the most common pattern and the hardest to catch. The AI writes code that looks correct at first glance, and often passes basic tests, but handles edge cases incorrectly.

A typical example: an agent writes a function to parse dates from user input. It handles the common formats fine. But it silently interprets an ambiguous date like “04/05/2026” in US month/day order (April 5), even though the codebase convention is ISO 8601 (YYYY-MM-DD), where the order is never in doubt. No error, no crash, just wrong data flowing through the system.
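To make the date case concrete, here is a minimal TypeScript sketch of a stricter parser. It assumes the convention is ISO 8601 calendar dates (YYYY-MM-DD) and that ambiguous input should be rejected rather than guessed; `parseIsoDate` is a hypothetical helper, not taken from any particular codebase.

```typescript
// Hypothetical helper: accepts only ISO 8601 calendar dates (YYYY-MM-DD)
// and throws on anything else instead of guessing a locale.
//
// Contrast with new Date("04/05/2026"): parsing of non-ISO strings is
// engine-specific, and V8 (Node.js, Chrome) typically reads it month-first.
function parseIsoDate(input: string): Date {
  const match = /^(\d{4})-(\d{2})-(\d{2})$/.exec(input.trim());
  if (!match) {
    throw new Error(`Expected an ISO 8601 date (YYYY-MM-DD), got "${input}"`);
  }
  const year = Number(match[1]);
  const month = Number(match[2]);
  const day = Number(match[3]);

  // Build the date in UTC so the result doesn't shift with the server timezone.
  const date = new Date(Date.UTC(year, month - 1, day));

  // Date.UTC silently rolls invalid values over (2026-02-30 becomes March 2),
  // so confirm that every component survived the round trip.
  if (
    date.getUTCFullYear() !== year ||
    date.getUTCMonth() !== month - 1 ||
    date.getUTCDate() !== day
  ) {
    throw new Error(`Not a real calendar date: "${input}"`);
  }
  return date;
}

console.log(parseIsoDate("2026-04-05").toISOString()); // 2026-04-05T00:00:00.000Z
```

The design choice is to fail loudly: a thrown error surfaces during testing, while a silently misread date only shows up later as bad data downstream.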

The underlying pattern: AI agents optimize for the happy path. They’ll write code that works for the test cases you’d think to write, but miss the implicit conventions and constraints that a developer who’s lived in the codebase would know.

How to catch it: Review AI code the way you’d review code from a smart contractor who just joined the team. They write clean code but don’t know your conventions. Check for assumptions about data formats, timezone handling, null and undefined behavior, and anything involving implicit business rules.

Pattern 2: Confident refactoring that breaks callers

When an AI agent refactors a module, it tends to make the module internally cleaner while subtly changing the contract with the rest of the system. This shows up as renamed parameters, changed return types (returning undefined where it used to throw), and modified default behaviors.

The agent’s changes to the refactored module look great in isolation. The problem is three files away, where some other code depended on the old behavior. TypeScript catches the obvious ones (renamed exports, changed signatures). It doesn’t catch behavioral changes.
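Here is a minimal sketch of the kind of behavioral change the compiler won't flag. `findUserV1` and `findUserV2` are hypothetical stand-ins for one function before and after a refactor that swaps "throw when missing" for "return undefined". The caller `userExists` compiles against both, but its answer changes.

```typescript
interface User {
  id: string;
  name: string;
}

const users = new Map<string, User>([["u1", { id: "u1", name: "Ada" }]]);

// Before the refactor: a missing user is an error.
function findUserV1(id: string): User {
  const user = users.get(id);
  if (!user) throw new Error(`User ${id} not found`);
  return user;
}

// After a "simplified the return type" refactor: a missing user is undefined.
function findUserV2(id: string): User | undefined {
  return users.get(id);
}

// A caller elsewhere that relied on the throw. It type-checks against both
// versions because it never reads the return value.
function userExists(find: (id: string) => unknown, id: string): boolean {
  try {
    find(id);
    return true;
  } catch {
    return false;
  }
}

console.log(userExists(findUserV1, "missing")); // false
console.log(userExists(findUserV2, "missing")); // true (silently wrong)
```

A caller like this is exactly what a search for usages will surface and a review limited to the changed files will miss.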

How to catch it: When reviewing a refactor, don’t just look at the files the agent changed. Search the codebase for every caller of the refactored interface. Pay special attention to error handling paths and default values. If the agent says “simplified the return type,” that’s a flag to check whether any caller handled the complexity the agent removed.

Pattern 3: Test suites that test the implementation, not the behavior

AI agents write tests that pass. That’s the problem: they’re too good at it. They’ll write tests that mirror the implementation exactly, making them pass by construction rather than by correctness.

A common example: tests where the expected value comes from the function under test rather than being calculated independently. If the expectation is computed by calling the same code, the test always passes and never catches a regression, because when the function changes, the expectation changes with it. If it was pasted from the function’s output, whatever bug existed at the time is locked in as the “correct” answer.

Another variant: the agent mocks everything so thoroughly that the test runs entirely against fake data. It validates that the mocking framework works, not that the code works.

How to catch it: For any test the agent writes, ask: “Would this test fail if the function returned a hardcoded value?” If the answer is no, the test is useless. Favor integration tests over unit tests for AI-generated code. Real database calls, real API responses, real file system operations. Mocks should be the exception, not the default.
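The hardcoded-value question can be applied almost mechanically. The sketch below uses a hypothetical `applyDiscount` function and plain boolean checks instead of a real test framework, to stay self-contained. The tautological check derives its expectation from the code under test; the behavioral check uses values worked out by hand from the requirement. Only the behavioral check notices when the implementation is replaced by a constant.

```typescript
type DiscountFn = (priceCents: number, percent: number) => number;

// Hypothetical function under test: price after a percentage discount, in cents.
const applyDiscount: DiscountFn = (priceCents, percent) => {
  if (percent < 0 || percent > 100) throw new RangeError("percent must be 0-100");
  return Math.round(priceCents * (1 - percent / 100));
};

// Tautological: the expectation comes from the code under test,
// so it passes whether or not the implementation is correct.
function tautologicalTest(impl: DiscountFn): boolean {
  const expected = impl(10000, 15);
  return impl(10000, 15) === expected;
}

// Behavioral: expectations are worked out by hand from the requirement.
function behavioralTest(impl: DiscountFn): boolean {
  return (
    impl(10000, 15) === 8500 && // 15% off $100.00 is $85.00
    impl(999, 0) === 999 && // no discount leaves the price unchanged
    impl(999, 100) === 0 // a 100% discount is free
  );
}

// "Would this test fail if the function returned a hardcoded value?"
const hardcoded: DiscountFn = () => 8500;

console.log(tautologicalTest(applyDiscount), tautologicalTest(hardcoded)); // true true
console.log(behavioralTest(applyDiscount), behavioralTest(hardcoded)); // true false
```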

Pattern 4: Copy-paste drift across similar components

When an agent creates multiple similar components (API endpoints, UI elements, data processing pipelines), it copies patterns from the first one. But it doesn’t always copy correctly. Small variations creep in: one endpoint validates input while another doesn’t, one component handles loading states while its sibling doesn’t, one pipeline logs errors while the parallel one silently swallows them.

This is especially insidious because each component in isolation looks fine. The inconsistency only becomes visible when you compare them side by side.

How to catch it: When an agent creates multiple similar things, literally diff them against each other. Any difference should be intentional. If you find inconsistencies, it’s usually a sign that the underlying pattern should be extracted into a shared abstraction, which you should add to the spec for the next task rather than asking the agent to fix it ad hoc.
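One way such an extracted abstraction might look, sketched in framework-agnostic TypeScript. `withValidation`, `validateCreateUser`, `createUser`, and the `Result` shape are all hypothetical; in a real project they would map onto your framework's request and response types.

```typescript
// Hypothetical response shape; a real project would use its framework's types.
type Result = { status: number; body: unknown };
type Validator<T> = (raw: unknown) => T; // throws on invalid input

// One shared wrapper, so every endpoint validates and reports errors the same way.
function withValidation<T>(
  validate: Validator<T>,
  handler: (input: T) => Result,
): (raw: unknown) => Result {
  return (raw) => {
    let input: T;
    try {
      input = validate(raw);
    } catch (err) {
      const message = err instanceof Error ? err.message : String(err);
      return { status: 400, body: { error: message } };
    }
    // Errors thrown by the handler itself are not turned into 400s.
    return handler(input);
  };
}

// Example validator for one hypothetical endpoint.
function validateCreateUser(raw: unknown): { name: string } {
  const name = (raw as { name?: unknown } | null)?.name;
  if (typeof name !== "string" || name.length === 0) {
    throw new Error("name must be a non-empty string");
  }
  return { name };
}

const createUser = withValidation(validateCreateUser, (input) => ({
  status: 201,
  body: { created: input.name },
}));

console.log(createUser({ name: "Ada" })); // { status: 201, body: { created: 'Ada' } }
console.log(createUser({}).status); // 400
```

Once every endpoint is built through the same wrapper, a missing validator becomes a visible omission in the diff instead of a subtle variation between siblings.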

Pattern 5: Dependency and import sprawl

AI agents love installing packages. An agent asked to add a date picker will pull in a full date library even when the project already has one. Asked to format a string, it’ll import lodash for a single function that’s a one-liner in vanilla JavaScript.
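For reference, here are native equivalents of two lodash string helpers an agent might import. They match lodash's results for ordinary ASCII strings; lodash also coerces non-string arguments and handles astral Unicode characters, so treat these as a sketch rather than drop-in replacements. The names `capitalize` and `invoiceNumber` are illustrative.

```typescript
// Like _.capitalize: first character upper-case, the rest lower-case.
// Matches lodash for ordinary ASCII strings only.
const capitalize = (s: string): string =>
  s.charAt(0).toUpperCase() + s.slice(1).toLowerCase();

// Like _.padStart: built into String.prototype since ES2017.
const invoiceNumber = String(42).padStart(6, "0");

console.log(capitalize("hELLO")); // Hello
console.log(invoiceNumber); // 000042
```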

Over time, this creates dependency bloat. But the subtler issue is duplicate functionality: two date libraries with slightly different APIs being used in different parts of the codebase, leading to inconsistent behavior and larger bundle sizes.

How to catch it: Review imports carefully in AI-generated code. If the agent added a new dependency, check whether the project already has a library that does the same thing. Add a section to your CLAUDE.md file listing preferred libraries for common tasks so the agent knows what’s already available.
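The duplicate-library check can also be automated. Below is a sketch of a check you might run in CI after an agent's change. The `OVERLAPPING` groupings are illustrative assumptions, not an authoritative list; a real project would maintain its own, ideally mirroring the preferred-libraries section of CLAUDE.md.

```typescript
// Illustrative groups of packages that overlap in purpose; adjust to your stack.
const OVERLAPPING: Record<string, string[]> = {
  dates: ["moment", "dayjs", "date-fns", "luxon"],
  utilities: ["lodash", "underscore", "ramda"],
  http: ["axios", "got", "node-fetch", "ky"],
};

// Returns each category in which more than one library is installed.
function findDuplicateCategories(
  deps: Record<string, string>,
): Record<string, string[]> {
  const installed = new Set(Object.keys(deps));
  const result: Record<string, string[]> = {};
  for (const [category, libs] of Object.entries(OVERLAPPING)) {
    const present = libs.filter((lib) => installed.has(lib));
    if (present.length > 1) result[category] = present;
  }
  return result;
}

// Example input: the "dependencies" field of package.json after an agent's change.
const deps = { "date-fns": "^3.0.0", dayjs: "^1.11.0", react: "^18.0.0" };
console.log(findDuplicateCategories(deps)); // { dates: [ 'dayjs', 'date-fns' ] }
```

A hand-maintained list is crude, but it catches the specific failure described above: a second library arriving for a job the project already covers.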

The review process that works for AI code

Traditional code review assumes a human author who understands context, follows conventions, and makes intentional tradeoffs. AI code review requires different assumptions:

Assume no institutional knowledge. The agent doesn’t know your team’s conventions unless they’re documented. Every assumption it makes is based on what was in its context window, not on six months of team meetings.

Review the boundaries, not the internals. AI-generated internal logic is usually fine. The bugs live at the interfaces: function signatures, API contracts, error handling, data formats. Focus your review time there.

Test behavior, not implementation. Run the code. Click through the UI. Send real API requests. AI code that looks clean in a diff can behave unexpectedly under real conditions.

Check what the agent didn’t change. Some of the worst bugs come from what the agent left alone. If it added a new feature to a module, check whether the existing error handling, logging, and documentation still apply to the new code path.

Use visual diffs. When you’re reviewing 200 lines of AI changes across four files, a line-by-line text diff makes it hard to see the big picture. Color-coded visual diffs that show which files changed, how much changed, and what the before and after look like make pattern recognition faster.

Why this matters more as AI coding scales

If you’re doing agentic coding with multiple agents working in parallel (which is increasingly common in 2026), the review challenge multiplies. Three agents producing 200 lines each means you’re reviewing 600 lines of code written by entities with no communication between them and no shared understanding of the codebase beyond what was in each agent’s context window.

The quality numbers reflect this. That 43% debugging rate isn’t because AI writes bad code. It’s because the review and testing workflows designed for human-authored code don’t catch the specific bug patterns AI produces. Traditional PR review is optimized for catching the mistakes humans make (logic errors, forgotten edge cases, typos). AI makes different mistakes.

Teams that handle this well do three things:

- They document everything. Not just code comments, but architecture decisions, data format conventions, preferred libraries, and business rules. All of it goes into files the agent reads (CLAUDE.md, spec docs, architecture diagrams). More documented context means fewer “plausible but wrong” bugs.

- They scope tasks tightly. A 30-minute agent task that touches three files is reviewable. A two-hour agent task that touches twenty files is a coin flip. Smaller tasks, more frequently reviewed.

- They treat review as a first-class activity. Not something you rush through between meetings. Dedicated time, good tooling, and the discipline to reject work that doesn’t meet standards, even when it “mostly works.”

AI-generated code isn’t going away, and the volume will only increase. The developers and teams who invest in understanding the specific bug patterns it produces, and build review workflows around those patterns, will ship more reliably than the ones who treat AI code review the same as human code review.

The code quality bar doesn’t change just because the author isn’t human. If anything, it needs to be higher, because the failure modes are less familiar.
