What is OpenCode? The 2026 Beginner's Guide

OpenCode is the open-source, model-agnostic AI coding agent. Architecture, supported models, install, and how it compares to Claude Code and Codex.

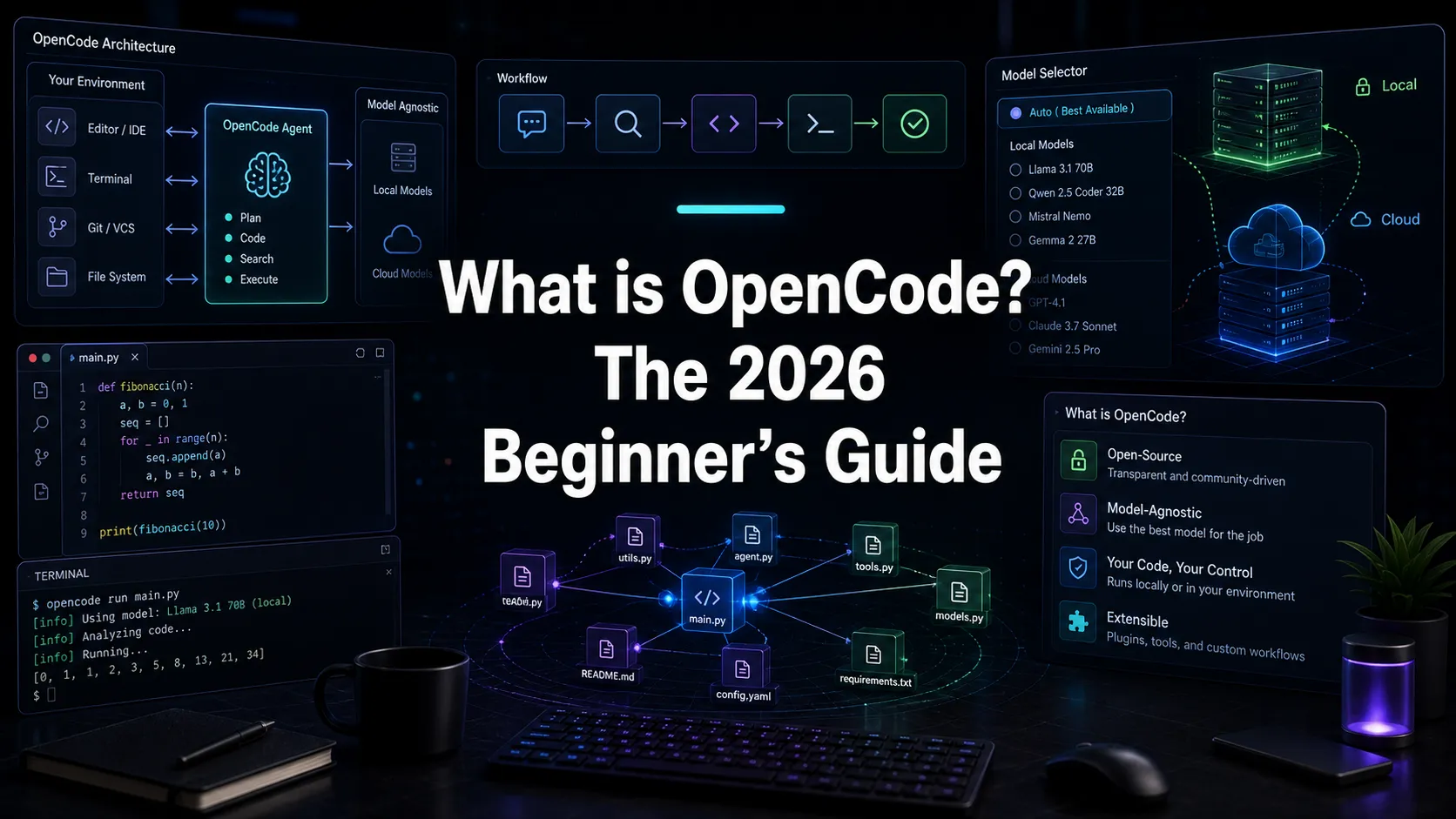

OpenCode is an open-source, model-agnostic AI coding agent that runs in your terminal, desktop, or IDE and can drive over 75 AI providers including Anthropic, OpenAI, Google, and local models via Ollama. It is one of the most prominent open AI coding agents in 2026, alongside Claude Code and Codex. This guide covers what OpenCode actually is, how its architecture works, the models it supports, and why it has crossed 150,000 GitHub stars.

OpenCode in one paragraph

OpenCode is a coding agent you run on your own machine that talks to whatever model you choose. The MIT-licensed core is a Go TUI plus a Bun and JavaScript HTTP server, which means you can drive it from a terminal, a desktop app, a VS Code extension, or any HTTP client. It maintains git-based snapshots, supports /undo and /redo, and uses Language Server Protocol diagnostics to feed compiler errors back to the model after edits. It is not a wrapper around Claude or GPT. It is the harness, and you bring the brain.

Quick Answer

- What is OpenCode? A free, MIT-licensed AI coding agent that runs on your machine and supports 75+ model providers.

- Who maintains OpenCode? Anomaly leads the open-source project, which has 150,000+ GitHub stars and active releases.

- What models does OpenCode support? Anthropic Claude, OpenAI GPT, Google Gemini, DeepSeek, Groq, plus local models through Ollama and LM Studio. The full list is in Models.dev.

- What is OpenCode’s architecture? A client-server design. Go-based TUI plus a Bun and JavaScript HTTP server. Multiple frontends can connect to the same server.

- How does OpenCode compare to Claude Code? OpenCode is model-agnostic and open source. Claude Code is locked to Claude models. In Builder.io’s tests with Claude Sonnet 4.5, OpenCode took roughly 78 percent longer than Claude Code on identical tasks but produced more thorough output.

- Is OpenCode free? Yes. The tool is free. You pay model providers for inference, or you run a local model for $0.

How OpenCode is built

OpenCode’s architecture is the most important thing to understand about it. The project is split into:

- A TUI in Go, written with Bubble Tea. This is the terminal interface most users see.

- An HTTP server in JavaScript and Bun, using the Hono framework. The server handles model calls, tool execution, and state management.

- A protocol layer between the TUI and the server, which means anything that can speak HTTP can become an OpenCode frontend. Desktop apps, browser extensions, and IDE plugins all use the same surface.

This is fundamentally different from Claude Code, which is a single TypeScript process, and from Codex, which mixes a local CLI/app experience with OpenAI-managed cloud surfaces. OpenCode’s split architecture trades some integration polish for flexibility: you can run the server in one place and the client in another, you can build alternative clients, and you can hack on either layer without touching the other.
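The client-server split can be illustrated with a toy server and a toy client. This is a minimal sketch, not OpenCode's actual protocol: the `/message` route, the JSON fields, and the `ToyAgentHandler` and `send_prompt` names are all hypothetical and chosen only for illustration.

```python
import json
import threading
import urllib.request
from http.server import BaseHTTPRequestHandler, HTTPServer


class ToyAgentHandler(BaseHTTPRequestHandler):
    """Stand-in for the agent server. Route and payload shape are hypothetical."""

    def do_POST(self):
        length = int(self.headers.get("Content-Length", 0))
        payload = json.loads(self.rfile.read(length) or b"{}")
        # A real server would call a model and run tools here.
        body = json.dumps({"reply": f"received: {payload.get('prompt', '')}"}).encode()
        self.send_response(200)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, *args):
        pass  # keep the example quiet


def send_prompt(base_url: str, prompt: str) -> str:
    """Any frontend (TUI, desktop app, IDE plugin) reduces to a call like this."""
    request = urllib.request.Request(
        f"{base_url}/message",
        data=json.dumps({"prompt": prompt}).encode(),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    # Bypass any system proxy so the local demo always talks to 127.0.0.1 directly.
    opener = urllib.request.build_opener(urllib.request.ProxyHandler({}))
    with opener.open(request, timeout=5) as response:
        return json.loads(response.read())["reply"]


def demo() -> str:
    server = HTTPServer(("127.0.0.1", 0), ToyAgentHandler)  # port 0 = pick a free port
    threading.Thread(target=server.serve_forever, daemon=True).start()
    try:
        return send_prompt(f"http://127.0.0.1:{server.server_port}", "list files")
    finally:
        server.shutdown()
        server.server_close()
```

Because the client only needs `send_prompt`, swapping the terminal for a desktop app or an editor plugin leaves the server untouched, which is the flexibility the split design is trading for.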

What OpenCode does well

Model agnosticism

The headline feature. OpenCode supports any model that exposes an OpenAI-compatible or Anthropic-compatible API, plus local models through Ollama and LM Studio. The full registry lives at Models.dev, which currently lists 75+ providers. You can switch from Claude Sonnet to a GPT-5 Codex-family model to a 14-billion-parameter local model with a single config change.
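To make the "one config change" idea concrete, here is a small sketch that assumes models are addressed by a single `provider/model` string. That convention, the `config` dictionary, and the model names are illustrative assumptions, so check the OpenCode docs for the real config format.

```python
from typing import NamedTuple


class ModelRef(NamedTuple):
    provider: str
    model: str


def parse_model_ref(ref: str) -> ModelRef:
    """Split 'provider/model' on the first slash only; model IDs may contain slashes."""
    provider, sep, model = ref.partition("/")
    if not sep or not provider or not model:
        raise ValueError(f"expected 'provider/model', got {ref!r}")
    return ModelRef(provider, model)


# Hypothetical config: switching models means changing this one string.
config = {"model": "anthropic/claude-sonnet"}
config["model"] = "ollama/some-local-model:14b"

selected = parse_model_ref(config["model"])
```

Everything else in the agent loop stays the same; only the provider and model the harness talks to change.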

Local models for privacy-sensitive work

Because OpenCode supports Ollama and LM Studio out of the box, you can run the entire agent loop without any data leaving your machine. For regulated industries, classified work, or developers who simply do not want their code on a vendor’s servers, this is decisive. Claude Code and Codex cannot match it.

LSP diagnostics in the loop

OpenCode spawns Language Server Protocol servers and feeds compiler diagnostics back to the model after every edit. If the agent introduces a TypeScript type error, the next round of context includes the error and the model can self-correct. Among the three agents compared here, only OpenCode does this by default in 2026: Claude Code and Codex do not feed LSP diagnostics into the loop by default.
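The shape of that feedback loop can be sketched in a few lines. This is an illustration under stated assumptions, not OpenCode's implementation: Python's built-in `compile()` stands in for a language server, and `fake_model` is a hypothetical stub that replaces a real model call.

```python
from typing import Callable, List


def diagnose(source: str) -> List[str]:
    """Stand-in for a language server: report Python syntax errors as diagnostics."""
    try:
        compile(source, "<edit>", "exec")
        return []
    except SyntaxError as err:
        return [f"line {err.lineno}: {err.msg}"]


def edit_with_feedback(
    propose_edit: Callable[[str, List[str]], str],
    source: str,
    max_rounds: int = 3,
) -> str:
    """Ask for an edit, check it, and hand diagnostics back until clean or out of rounds."""
    diagnostics: List[str] = []
    for _ in range(max_rounds):
        source = propose_edit(source, diagnostics)
        diagnostics = diagnose(source)
        if not diagnostics:
            break
    return source


def fake_model(source: str, diagnostics: List[str]) -> str:
    """Hypothetical model: the first attempt is broken, the second reacts to the error."""
    if not diagnostics:
        return "def add(a, b:\n    return a + b\n"  # missing ')'
    return "def add(a, b):\n    return a + b\n"


result = edit_with_feedback(fake_model, "")
```

The key design point is that diagnostics become part of the next prompt, so the model sees its own mistake instead of the user having to report it.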

Git-based undo and redo

OpenCode snapshots your repo before every meaningful change. Run /undo to roll back. Run /redo to reapply. This is more aggressive than Claude Code’s permission model. Claude Code asks you before edits. OpenCode lets the agent edit, then trusts you to roll back if needed.
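The snapshot-then-edit pattern behaves like a classic undo/redo stack. The sketch below keeps snapshots in memory as dictionaries of file contents. It is a toy stand-in for git-based snapshots, and the `SnapshotHistory` class is a hypothetical name, not part of OpenCode.

```python
from typing import Dict, List

Snapshot = Dict[str, str]  # path -> file contents


class SnapshotHistory:
    """In-memory undo/redo over whole-tree snapshots (a toy stand-in for git snapshots)."""

    def __init__(self, initial: Snapshot) -> None:
        self._undo: List[Snapshot] = []
        self._redo: List[Snapshot] = []
        self.current: Snapshot = dict(initial)

    def apply(self, change: Snapshot) -> None:
        """Snapshot the current state, then apply the agent's change."""
        self._undo.append(dict(self.current))
        self._redo.clear()  # a new edit invalidates the redo chain
        self.current.update(change)

    def undo(self) -> bool:
        """Restore the previous snapshot; return False if there is nothing to undo."""
        if not self._undo:
            return False
        self._redo.append(dict(self.current))
        self.current = self._undo.pop()
        return True

    def redo(self) -> bool:
        """Reapply the most recently undone state; return False if there is none."""
        if not self._redo:
            return False
        self._undo.append(dict(self.current))
        self.current = self._redo.pop()
        return True
```

Taking the snapshot before the change, rather than asking permission first, is what lets the agent move quickly while still leaving you a way back.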

Open source you can actually fork

The whole project is MIT licensed. You can read the code, file issues, build extensions, or fork it. The community has shipped alternative TUIs, custom agents, and dozens of integrations. If a feature is missing, you can build it.

Where OpenCode struggles

Speed

Builder.io ran a head-to-head test using Claude Sonnet 4.5 on identical tasks. Total session time: Claude Code 9 minutes 9 seconds, OpenCode 16 minutes 20 seconds. OpenCode took about 78 percent longer. Some of the gap is the LSP loop, some is the client-server overhead, and some is OpenCode’s tendency to be more thorough (it wrote 94 tests where Claude Code wrote 73). Faster is not always better, but if speed matters for you, this is the trade.
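The percentages follow directly from the reported session times and test counts. The short calculation below uses only the figures quoted above.

```python
def to_seconds(minutes: int, seconds: int) -> int:
    return minutes * 60 + seconds


claude_code = to_seconds(9, 9)   # 549 seconds
opencode = to_seconds(16, 20)    # 980 seconds

# Relative increases, using Claude Code as the baseline.
extra_time_pct = (opencode - claude_code) / claude_code * 100
extra_tests_pct = (94 - 73) / 73 * 100

print(f"OpenCode took {extra_time_pct:.1f}% longer")        # 78.5% longer
print(f"OpenCode wrote {extra_tests_pct:.1f}% more tests")  # 28.8% more tests
```

Put differently, in this one test the extra time bought about 29 percent more tests, which is the trade-off to weigh.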

Local model tool calling

OpenCode supports local models, but tool calling on smaller open-weight models is hit or miss. The 70B-class models are usable. Below that, expect the agent to hallucinate tool arguments or skip tools entirely. If you want full local privacy, plan to run a serious model.
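One common defence against hallucinated tool arguments is to validate every tool call before running it. The sketch below shows the idea with a hypothetical tool registry. The tool names, the `TOOLS` table, and `validate_tool_call` are illustrative assumptions, not a description of OpenCode's internals.

```python
from typing import Any, Dict, List

# Hypothetical tool registry: tool name -> required argument names and types.
TOOLS: Dict[str, Dict[str, type]] = {
    "read_file": {"path": str},
    "write_file": {"path": str, "content": str},
}


def validate_tool_call(name: str, args: Dict[str, Any]) -> List[str]:
    """Return a list of problems; an empty list means the call is well-formed."""
    if name not in TOOLS:
        return [f"unknown tool: {name}"]
    spec = TOOLS[name]
    problems = [f"missing argument: {key}" for key in spec if key not in args]
    problems += [f"unexpected argument: {key}" for key in args if key not in spec]
    problems += [
        f"wrong type for {key}: expected {spec[key].__name__}"
        for key in spec
        if key in args and not isinstance(args[key], spec[key])
    ]
    return problems
```

A harness can feed these problems back to the model as an error message, which gives a smaller model a second chance instead of silently executing a malformed call.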

Configuration overhead

Model agnosticism comes with a tax. Every provider has its own auth flow, rate limits, and quirks. Claude Code has one configuration path. Codex bundles with ChatGPT. OpenCode wants you to wire up whichever providers you use, manage keys, and tune per-model settings. Not hard, but not zero.

Who OpenCode is for

OpenCode is the right pick when:

- You want to avoid vendor lock-in.

- You need fully local execution for privacy or compliance.

- You want LSP diagnostics in the agent loop.

- You want to read, fork, or extend the source.

- Your budget is tight enough that bundled subscriptions matter less than per-token economics.

OpenCode is the wrong pick when you want the fastest path to value (Claude Code), the deepest GitHub and ChatGPT integration (Codex), or a polished commercial product with first-party support.

OpenCode and Nimbalyst

Nimbalyst is the open-source visual workspace for building with Codex, Claude Code, and more. OpenCode support is on the roadmap. When it ships, you will be able to run OpenCode sessions in the same workspace alongside Claude Code and Codex, with kanban session management, automatic git worktree isolation, and inline diff review across markdown, mockups, diagrams, and code.

Frequently Asked Questions

Is OpenCode the same as OpenAI Codex?

No. OpenCode and Codex are different projects. Codex is OpenAI’s official AI coding agent and uses OpenAI’s Codex-optimized GPT-5 model family. OpenCode is an open-source agent that supports 75+ providers, including OpenAI’s models, Anthropic, Google, DeepSeek, and local models. The naming is unfortunately similar.

How do I install OpenCode?

The fastest install on macOS or Linux is `curl -fsSL https://opencode.ai/install | bash`. On Windows, follow the manual install in the docs. After install, run `opencode auth login` for whichever provider you want, then run `opencode` to start the TUI. The OpenCode docs cover the full setup.

Is OpenCode better than Claude Code?

OpenCode and Claude Code solve different problems. Claude Code is faster, more polished, and locked to Claude. OpenCode is open source, model-agnostic, and supports local models. For a head-to-head, see OpenCode vs Claude Code or the broader Claude Code vs Codex vs OpenCode comparison.

Does OpenCode work on Windows?

Yes. OpenCode runs on macOS, Linux, and Windows. The TUI is built in Go so it cross-compiles cleanly. Local model support through Ollama works on all three platforms.

Can OpenCode run fully offline?

Yes, with a local model. Run Ollama or LM Studio with a tool-calling-capable model (70B class works best), point OpenCode at it, and the entire session runs without any external API calls. This is the most private setup in the AI coding space in 2026.
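Before pointing an agent at a local model, it helps to confirm that the local server is running and has a model pulled. The sketch below assumes Ollama's default address (`http://localhost:11434`) and its `/api/tags` endpoint for listing local models. The helper names are hypothetical, and nothing here is OpenCode-specific.

```python
import json
import urllib.error
import urllib.request
from typing import List


def model_names(tags_payload: dict) -> List[str]:
    """Extract model names from an Ollama-style /api/tags response body."""
    return [entry["name"] for entry in tags_payload.get("models", [])]


def list_local_models(base_url: str = "http://localhost:11434") -> List[str]:
    """Return locally available model names, or an empty list if the server is unreachable."""
    try:
        with urllib.request.urlopen(f"{base_url}/api/tags", timeout=2) as response:
            return model_names(json.load(response))
    except (urllib.error.URLError, OSError, ValueError):
        return []
```

An empty list means either the server is not running or no model has been pulled yet, and in both cases an offline session would not start.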

Is OpenCode safe to run on my codebase?

OpenCode takes git-based snapshots before changes and exposes /undo and /redo. It also supports per-agent tool permissions. It is no more or less safe than Claude Code or Codex. The standard advice applies: run agents in a clean working tree, on a feature branch or git worktree, and review diffs before merging.
