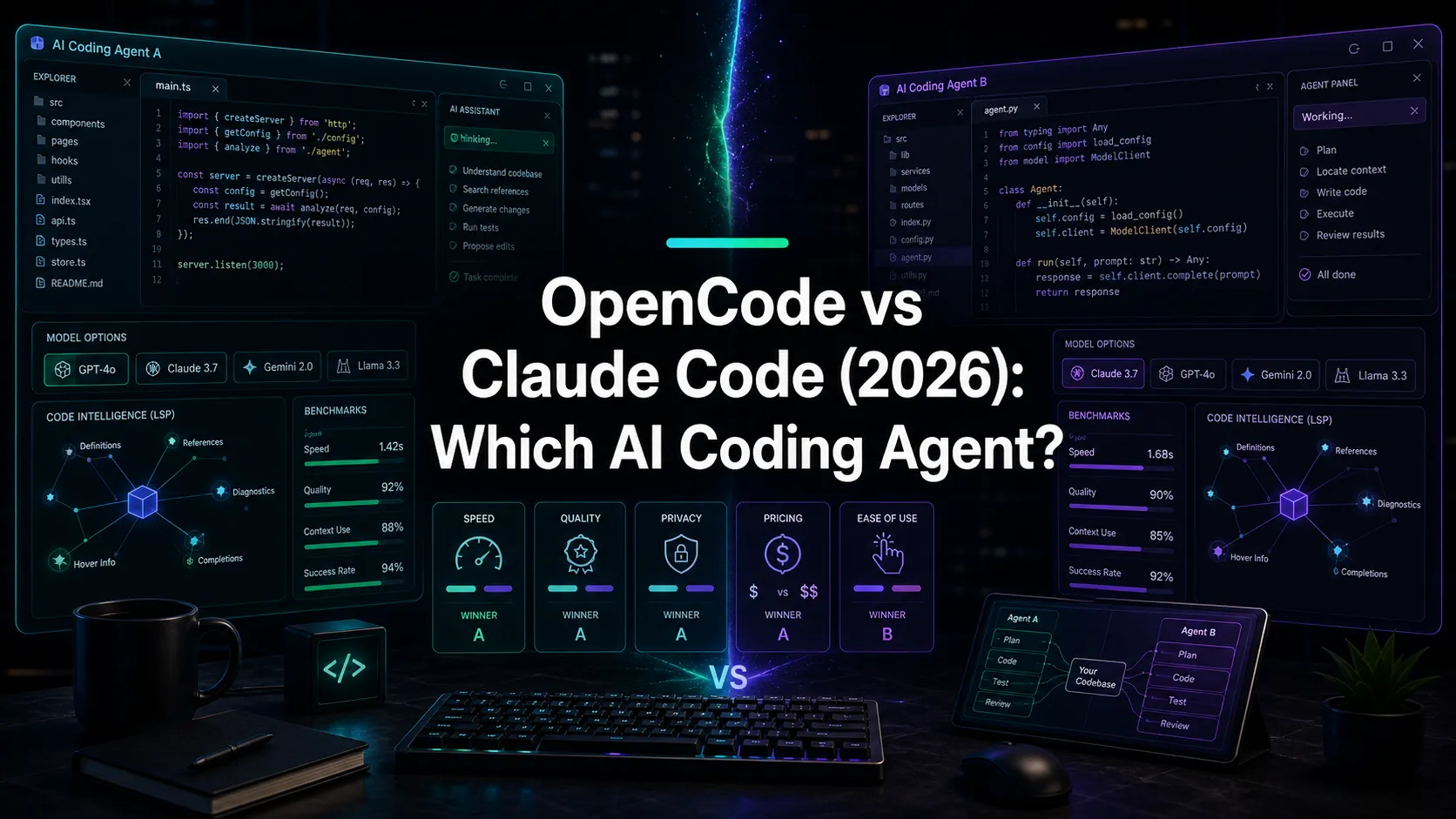

OpenCode vs Claude Code (2026): Which AI Coding Agent?

OpenCode vs Claude Code in 2026: speed, model choice, LSP, privacy, and pricing compared. The decisive head-to-head between the two AI coding agents.

OpenCode vs Claude Code is the comparison that matters most for developers picking an AI coding agent in 2026. OpenCode is community-led, MIT-licensed, and supports 75+ model providers. Claude Code is built by Anthropic, locked to Claude models, and optimized end-to-end. The two tools make fundamentally different bets on how you should work with AI. This guide compares them on architecture, speed, models, safety, and pricing, using verified data only.

OpenCode vs Claude Code: Quick Verdict

| Dimension | OpenCode | Claude Code |
|---|---|---|
| License | MIT, fully open source | Closed source (Anthropic) |
| Models | 75+ providers, including local via Ollama | Claude only (Opus, Sonnet, Haiku) |
| Speed (same model) | Session took about 78% longer (Builder.io test) | Baseline |
| LSP diagnostics in loop | Yes | No |
| Snapshots and undo | Yes (/undo, /redo) | Permission prompts before edits |
| Architecture | Go TUI + Bun/JS HTTP server | TypeScript single process |
| GitHub and external integrations | Community or MCP-based | Stronger first-party docs and MCP ecosystem |
| Default install | curl ... \| bash | npm install -g @anthropic-ai/claude-code |
| Pricing | Free tool. Pay providers separately. | Pro $20, Max $100 to $200 per month |

Pick OpenCode when you want full model choice, fully local privacy with Ollama, an open-source codebase you can fork, or LSP-driven self-correction.

Pick Claude Code when you want the fastest path to value, the highest raw model quality on complex reasoning, or you are already in the Anthropic ecosystem.

Architecture

OpenCode and Claude Code make opposite architectural bets.

Claude Code is a single TypeScript process. The harness, the CLI, and the model orchestration all live together. There is one main loop that calls Claude with tool definitions, executes the tools, and feeds the results back. This is deliberately simple. Anthropic optimizes the harness for the specific quirks of its own models, which is part of why Claude Code is fast. The trade-off is rigidity. You cannot swap the model. You cannot fork the harness. You get what Anthropic ships.
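To make the "one main loop" idea concrete, here is a minimal sketch in Python. It is not Claude Code's actual implementation, which is closed source. The names `ModelReply`, `run_agent`, `fake_model`, and `fake_tools` are hypothetical, and the model is a stub so the example runs without any API access.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional


@dataclass
class ModelReply:
    """What the (stubbed) model returns on each turn."""
    text: str
    tool_name: Optional[str] = None   # set when the model wants a tool run
    tool_input: Optional[str] = None


def run_agent(
    model: Callable[[List[dict]], ModelReply],
    tools: Dict[str, Callable[[str], str]],
    prompt: str,
    max_turns: int = 10,
) -> str:
    """Call the model, execute any requested tool, feed the result back."""
    messages = [{"role": "user", "content": prompt}]
    for _ in range(max_turns):
        reply = model(messages)
        messages.append({"role": "assistant", "content": reply.text})
        if reply.tool_name is None:
            return reply.text  # no tool requested: the loop is done
        tool = tools.get(reply.tool_name)
        if tool is None:
            result = f"unknown tool: {reply.tool_name}"
        else:
            result = tool(reply.tool_input or "")
        messages.append({"role": "tool", "content": result})
    return "stopped: turn limit reached"


# Hypothetical stand-ins: a scripted "model" and a single fake tool.
def fake_model(messages: List[dict]) -> ModelReply:
    last = messages[-1]
    if last["role"] == "tool":
        return ModelReply(text=f"The file contains: {last['content']}")
    return ModelReply(
        text="Reading the file.", tool_name="read_file", tool_input="notes.txt"
    )


fake_tools = {"read_file": lambda path: f"<contents of {path}>"}

print(run_agent(fake_model, fake_tools, "Summarize notes.txt"))
```

Everything happens in one process and one function call chain, which is the simplicity the single-process design is trading flexibility for.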

OpenCode is a client-server design. The Go-based TUI talks over HTTP to a JavaScript server running on Bun. The server handles model calls and tool execution, so anything that speaks HTTP can act as a frontend. This buys flexibility (alternative TUIs, desktop apps, IDE extensions) but adds latency. Every tool call involves a network hop, even on localhost. That is part of the speed gap.
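The client-server split can be illustrated with a small sketch using only the Python standard library. This is not OpenCode's real protocol: the `/tool` path, the JSON shape, and the names `ToolHandler`, `start_server`, and `call_server` are all assumptions made up for the example. It shows only the general pattern of a frontend sending a request over localhost HTTP and a server doing the work.

```python
import http.client
import json
import threading
from http.server import BaseHTTPRequestHandler, HTTPServer


class ToolHandler(BaseHTTPRequestHandler):
    """Hypothetical server side: receives a request, returns a result."""

    def do_POST(self) -> None:
        length = int(self.headers.get("Content-Length", 0))
        request = json.loads(self.rfile.read(length))
        # Stand-in for real work such as a model call or tool execution.
        body = json.dumps({"result": request["text"].upper()}).encode()
        self.send_response(200)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, format, *args) -> None:
        pass  # keep the demo output quiet


def start_server() -> HTTPServer:
    """Start the server on a free localhost port in a background thread."""
    server = HTTPServer(("127.0.0.1", 0), ToolHandler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    return server


def call_server(port: int, text: str) -> str:
    """Any frontend able to make this request could drive the server."""
    conn = http.client.HTTPConnection("127.0.0.1", port, timeout=5)
    try:
        conn.request(
            "POST",
            "/tool",
            body=json.dumps({"text": text}),
            headers={"Content-Type": "application/json"},
        )
        return json.loads(conn.getresponse().read())["result"]
    finally:
        conn.close()


server = start_server()
print(call_server(server.server_port, "hello"))
server.shutdown()
server.server_close()
```

Each `call_server` invocation pays for a connection, a request, and a response, which is the per-call overhead the paragraph above describes.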

Neither is wrong. They optimize for different constraints. Claude Code optimizes for speed inside one model family. OpenCode optimizes for flexibility across many.

Models

OpenCode supports 75+ providers via Models.dev. The current list includes Anthropic Claude, OpenAI GPT, Google Gemini, DeepSeek, and Groq, plus local models through Ollama and LM Studio. You can switch between them with one config change.

Claude Code supports Claude. That is the entire list. Opus 4.6, Sonnet 4.6, and Haiku 4.5 cover the spectrum from highest reasoning quality to lowest latency, but you cannot use GPT, Gemini, or a local model.

The decisive question is whether you value model choice. If your team is tied to a particular vendor’s pricing or has a preferred provider, OpenCode wins. If you trust Anthropic to ship the best Claude experience, Claude Code wins.

Speed

Builder.io ran a head-to-head test in early 2026 using Claude Sonnet 4.5 on identical tasks across both tools.

| Task | Claude Code | OpenCode |
|---|---|---|
| Cross-file rename | 3m 6s | 3m 13s |
| Bug fix | ~40s | ~40s |
| Test writing | 73 tests in 3m 12s | 94 tests in 9m 11s |
| Total session | 9m 9s | 16m 20s |

OpenCode took 78 percent longer overall. Some of that is the client-server overhead. Some is the LSP loop, which adds time but catches bugs the model would otherwise miss. Some is OpenCode being more thorough (it wrote 21 more tests). The gap is real, but it is not pure waste.
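The 78 percent figure follows directly from the session totals in the table. The short calculation below reproduces it and also works out the test-writing rate for each tool. The helper names `to_seconds` and `percent_longer` are just for this example.

```python
def to_seconds(minutes: int, seconds: int) -> int:
    """Convert a 'Xm Ys' duration into seconds."""
    return minutes * 60 + seconds


def percent_longer(baseline_s: int, other_s: int) -> float:
    """How much longer `other_s` is than `baseline_s`, as a fraction."""
    return (other_s - baseline_s) / baseline_s


claude_code_total = to_seconds(9, 9)    # 549 seconds
opencode_total = to_seconds(16, 20)     # 980 seconds

gap = percent_longer(claude_code_total, opencode_total)
print(f"OpenCode session took {gap:.1%} longer")  # 78.5% longer

# Test-writing task: tests produced per minute of wall-clock time.
claude_code_rate = 73 / to_seconds(3, 12) * 60
opencode_rate = 94 / to_seconds(9, 11) * 60
print(f"Claude Code: {claude_code_rate:.1f} tests/min")  # 22.8 tests/min
print(f"OpenCode:    {opencode_rate:.1f} tests/min")     # 10.2 tests/min
```

Note that "78 percent longer" describes elapsed time. Measured per test written, the gap on the test-writing task is larger than the extra 21 tests alone would explain.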

If your work is high-volume and time-sensitive, Claude Code’s speed advantage matters. If your work prioritizes correctness over throughput, OpenCode’s thoroughness can pay back.

Safety and undo

Claude Code defaults to read-only and asks before any destructive operation. You see every command, every file edit, every shell call before it runs. Conservative by design.

OpenCode flips this. The agent can edit and run, and you roll back with /undo if something went wrong. Git-based snapshots make rollback fast and reliable. This is faster in practice but requires more trust.
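The edit-first, roll-back-later model is easy to picture as a pair of stacks. The sketch below keeps snapshots in memory rather than in git, so it is only an illustration of the undo and redo behavior, not of how OpenCode stores its snapshots. The `SnapshotStore` class is hypothetical.

```python
from typing import Dict, List

Files = Dict[str, str]  # path -> file contents


class SnapshotStore:
    """Keeps full copies of a tiny in-memory workspace for undo and redo."""

    def __init__(self, files: Files) -> None:
        self.files: Files = dict(files)
        self._undo: List[Files] = []
        self._redo: List[Files] = []

    def edit(self, path: str, content: str) -> None:
        self._undo.append(dict(self.files))  # snapshot before the change
        self._redo.clear()                   # a new edit invalidates redo
        self.files[path] = content

    def undo(self) -> bool:
        if not self._undo:
            return False
        self._redo.append(dict(self.files))
        self.files = self._undo.pop()
        return True

    def redo(self) -> bool:
        if not self._redo:
            return False
        self._undo.append(dict(self.files))
        self.files = self._redo.pop()
        return True


workspace = SnapshotStore({"app.py": "print('v1')"})
workspace.edit("app.py", "print('v2')")   # the agent edits without asking
workspace.undo()                          # roll back after reviewing
print(workspace.files["app.py"])          # print('v1')
workspace.redo()
print(workspace.files["app.py"])          # print('v2')
```

The agent never blocks on a prompt here. Safety comes from being able to restore the previous state cheaply, which is the trade the paragraph above describes.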

Both approaches are reasonable. Claude Code suits developers who want explicit control. OpenCode suits developers who want the agent to make progress and rely on git for safety.

LSP diagnostics

OpenCode spawns Language Server Protocol servers and feeds compiler diagnostics back to the model after every edit. If the agent introduces a TypeScript type error, the next round includes the error and the model self-corrects. This is unique to OpenCode in 2026.
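The feedback loop can be sketched without a real language server. In the example below, Python's built-in `compile()` stands in for LSP diagnostics and a scripted function stands in for the model. Both are assumptions for illustration, as are the names `diagnose`, `edit_until_clean`, and `fake_propose`. A real setup would read diagnostics from a language server instead.

```python
from typing import Callable, List, Optional


def diagnose(source: str) -> Optional[str]:
    """Stand-in for a language server: report the first syntax error."""
    try:
        compile(source, "<agent-edit>", "exec")
        return None
    except SyntaxError as err:
        return f"line {err.lineno}: {err.msg}"


def edit_until_clean(
    propose: Callable[[List[str]], str],
    max_rounds: int = 3,
) -> str:
    """Ask for an edit, check it, and feed diagnostics back until clean."""
    feedback: List[str] = []
    for _ in range(max_rounds):
        candidate = propose(feedback)
        problem = diagnose(candidate)
        if problem is None:
            return candidate
        feedback.append(problem)  # the next round sees the error
    raise RuntimeError(f"still failing after {max_rounds} rounds: {feedback}")


# Hypothetical model: the first attempt has a bug, the retry fixes it.
def fake_propose(feedback: List[str]) -> str:
    if not feedback:
        return "def add(a, b:\n    return a + b\n"   # missing ")"
    return "def add(a, b):\n    return a + b\n"


fixed = edit_until_clean(fake_propose)
print(fixed)
```

The key point is that the error reaches the model on the very next round, before any test suite runs.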

Claude Code does not feed LSP diagnostics into the loop by default. The agent’s primary signal is whatever output the test command produces. For typed languages, OpenCode’s LSP loop is a meaningful advantage.

Pricing

Claude Code costs $20 per month for Pro, $100 for Max 5x, and $200 for Max 20x. Most working developers land at $100 to $200 per month for serious use. Paying for Anthropic’s API directly is also an option, with average usage around $6 per day on Sonnet 4.6.

OpenCode is free as a tool. You pay model providers. Costs span from $0 (Ollama with a local model) to $200-plus per month (heavy use of Claude Opus or GPT-Codex). Power users on bundled ChatGPT or Claude subscriptions pay nothing extra.

For a solo developer who only needs Claude, Claude Code Pro at $20 per month is the simplest path. For a team that wants to mix providers or run local models, OpenCode is cheaper at scale.
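A rough break-even calculation helps when choosing between a subscription and pay-as-you-go API access. The sketch below uses only the figures quoted above: the three plan prices and the $6 per day API average. The 22 working days per month is an assumption, and the comparison ignores the different usage limits on each plan, so treat the output as an estimate rather than a like-for-like comparison.

```python
API_COST_PER_DAY = 6.00  # USD, the average quoted above for Sonnet 4.6
PLANS = {"Pro": 20, "Max 5x": 100, "Max 20x": 200}  # USD per month


def monthly_api_cost(active_days: int) -> float:
    """API spend for a month with the given number of active days."""
    return API_COST_PER_DAY * active_days


def break_even_days(plan_price: float) -> float:
    """Active days per month at which API spend equals the plan price."""
    return plan_price / API_COST_PER_DAY


for name, price in PLANS.items():
    days = break_even_days(price)
    print(f"{name}: ${price}/month equals {days:.1f} days of API use")
# Pro: $20/month equals 3.3 days of API use
# Max 5x: $100/month equals 16.7 days of API use
# Max 20x: $200/month equals 33.3 days of API use

# Assumption: 22 working days in a month.
print(f"22 days on the API: ${monthly_api_cost(22):.0f}")  # $132
```

At the quoted average, a month of daily API use lands between the Max 5x and Max 20x prices, which matches the $100 to $200 range cited for serious use.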

GitHub and integrations

Claude Code has well-documented support for MCP, plus first-party docs for plugins, skills, hooks, subagents, and Remote Control. If you want a more opinionated ecosystem around one agent, that maturity matters.

OpenCode supports MCP too, and its docs now cover plugins, agent skills, custom tools, and LSP servers. The trade-off is still polish: you get more freedom, but the exact GitHub or workflow experience depends on which providers and community components you choose to install.

Who should pick which

Pick OpenCode if any of these apply:

- You need to avoid vendor lock-in.

- You need fully local execution for privacy or compliance.

- You want LSP diagnostics fed back to the model.

- You want to read, fork, or extend the source.

- You are running multiple model providers and want one harness.

Pick Claude Code if any of these apply:

- You want the fastest, smoothest experience out of the box.

- You are already paying for Claude Pro, Max, or Anthropic API access.

- You need the highest raw model quality on hard reasoning tasks.

- You want the most mature Claude-specific ecosystem around MCP, skills, plugins, and Remote Control.

- You only need one model and want one tool to learn.

OpenCode vs Claude Code in a visual workspace

If you do not want to choose between them, you do not have to. Nimbalyst is the open-source visual workspace for building with Codex, Claude Code, and more, with OpenCode support on the roadmap. You can run agent sessions side by side, compare their output on the same task, and review every diff inline across markdown, mockups, diagrams, and code. The agent harness becomes a choice per session, not a lock-in.

Frequently Asked Questions

Is OpenCode better than Claude Code?

Neither is universally better. OpenCode wins on openness, model choice, and LSP-aware self-correction. Claude Code wins on speed, polish, and raw model quality on complex reasoning. The right pick depends on whether you value flexibility (OpenCode) or end-to-end optimization with one model family (Claude Code).

Can I use OpenCode with Claude models?

Yes. OpenCode supports Claude Opus, Sonnet, and Haiku through the Anthropic API. Bring your Anthropic API key, run opencode auth login, and select the Claude model you want. You get OpenCode’s harness with Claude’s intelligence.

Is Claude Code open source?

No. Claude Code is closed source. Anthropic distributes the binary, but you cannot read or modify the source. OpenCode is MIT licensed with the full source on GitHub.

Which is faster, OpenCode or Claude Code?

Claude Code is faster. Builder.io’s tests on identical tasks with the same model showed Claude Code completing a sample session in 9 minutes 9 seconds versus OpenCode’s 16 minutes 20 seconds, so OpenCode took roughly 78 percent longer. Some of the difference reflects OpenCode being more thorough (more tests written). The rest reflects the client-server architecture and the LSP feedback loop.

Can I run OpenCode and Claude Code on the same project?

Yes. Both are compatible with any git repo. Many developers use both: Claude Code for fast interactive work and OpenCode for privacy-sensitive tasks or for experimenting with non-Claude models. With a tool like Nimbalyst, you can run both side by side in the same workspace once OpenCode support ships.
