AI-guided TDD

Run /tdd and the agent guides you through red-green-refactor cycles. Write failing tests, implement minimal code to pass, then refactor — with AI assistance at each step.

Capabilities

Test first, build right

Red: Write failing tests

The agent generates test cases from your feature description. Tests fail initially — confirming the feature doesn't exist yet.

Green: Make them pass

The agent writes the minimal implementation needed to make all tests pass. No over-engineering, no premature optimization, just enough code.

Refactor: Clean up

With passing tests as a safety net, refactor the implementation for readability and maintainability.

How It Works

How /tdd works

Type /tdd

Run the command and describe the feature or behavior you want to implement.

Red phase

The agent writes test cases that describe the desired behavior. Tests are run and confirmed to fail.

Green + Refactor

The agent implements the minimum code to pass, then refactors for quality. All tests stay green throughout.

Try It

Example prompts

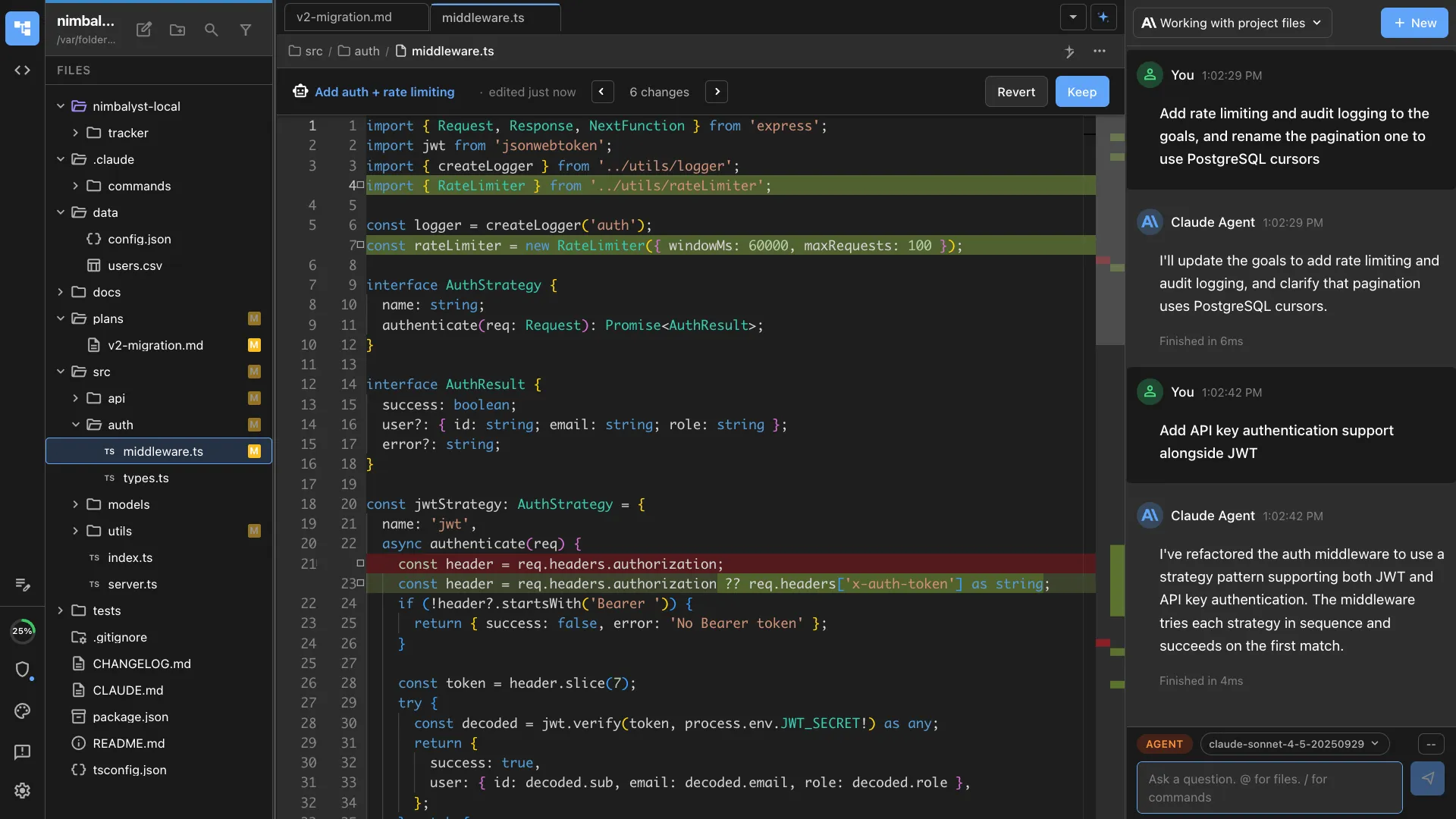

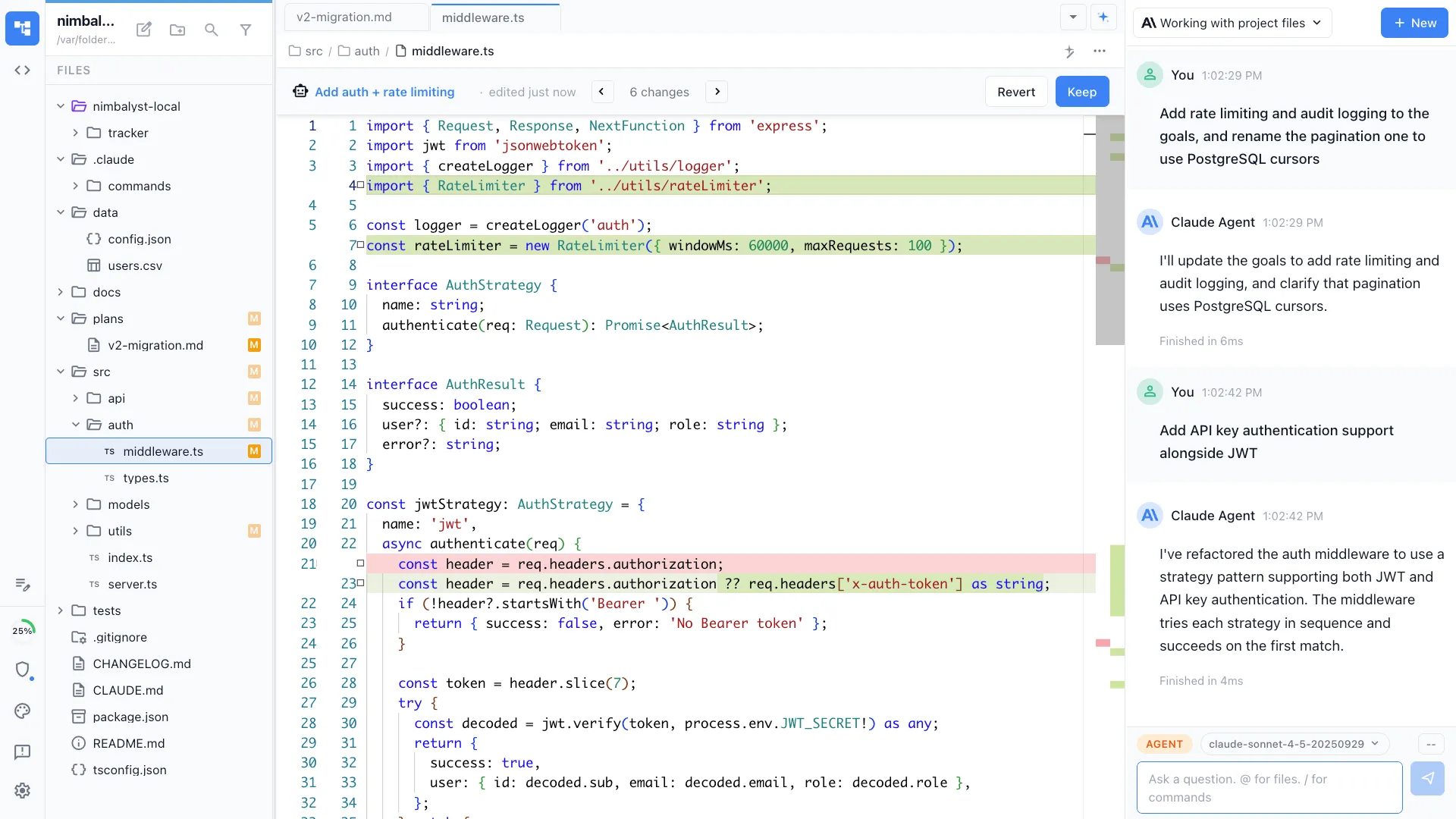

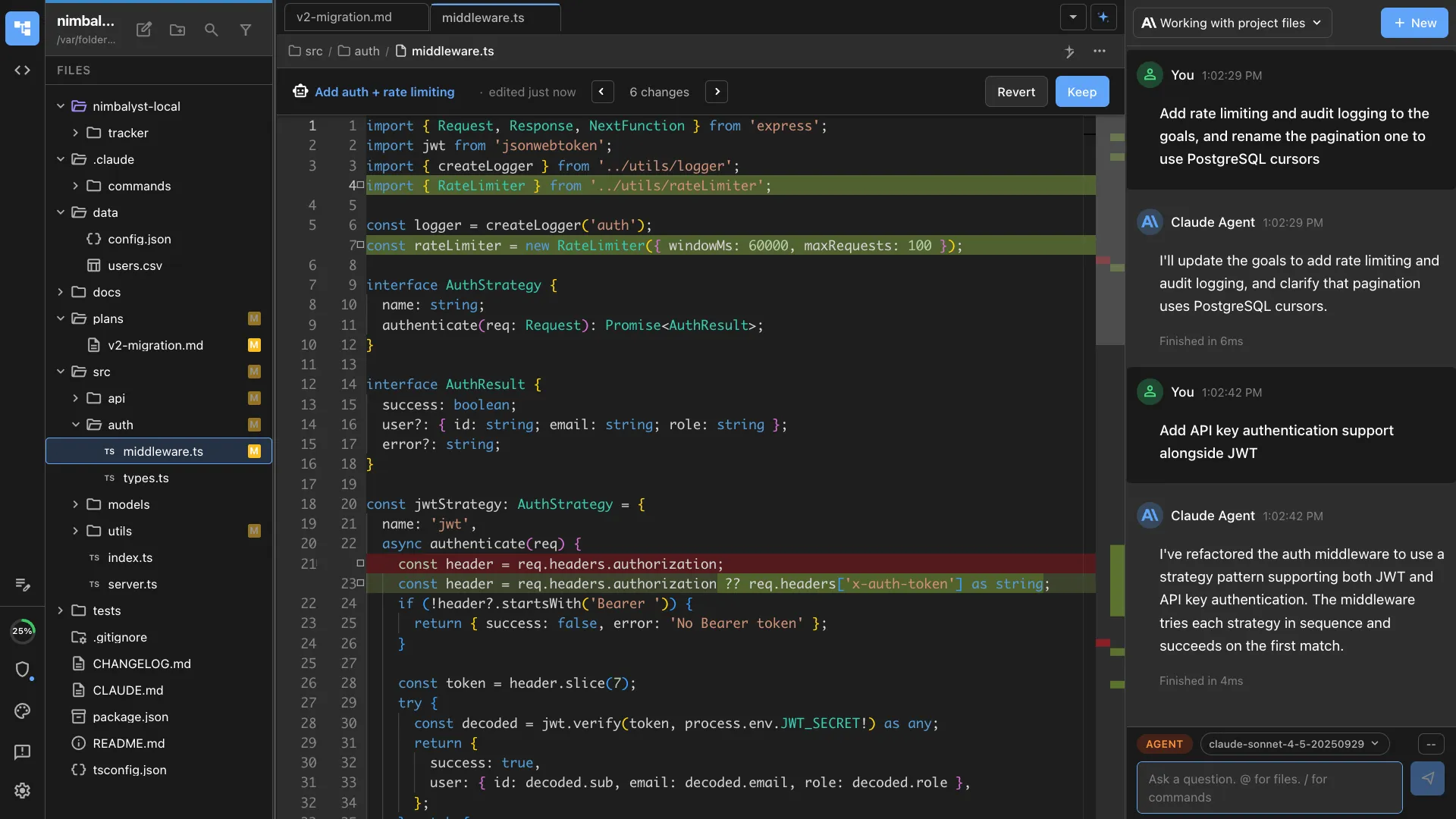

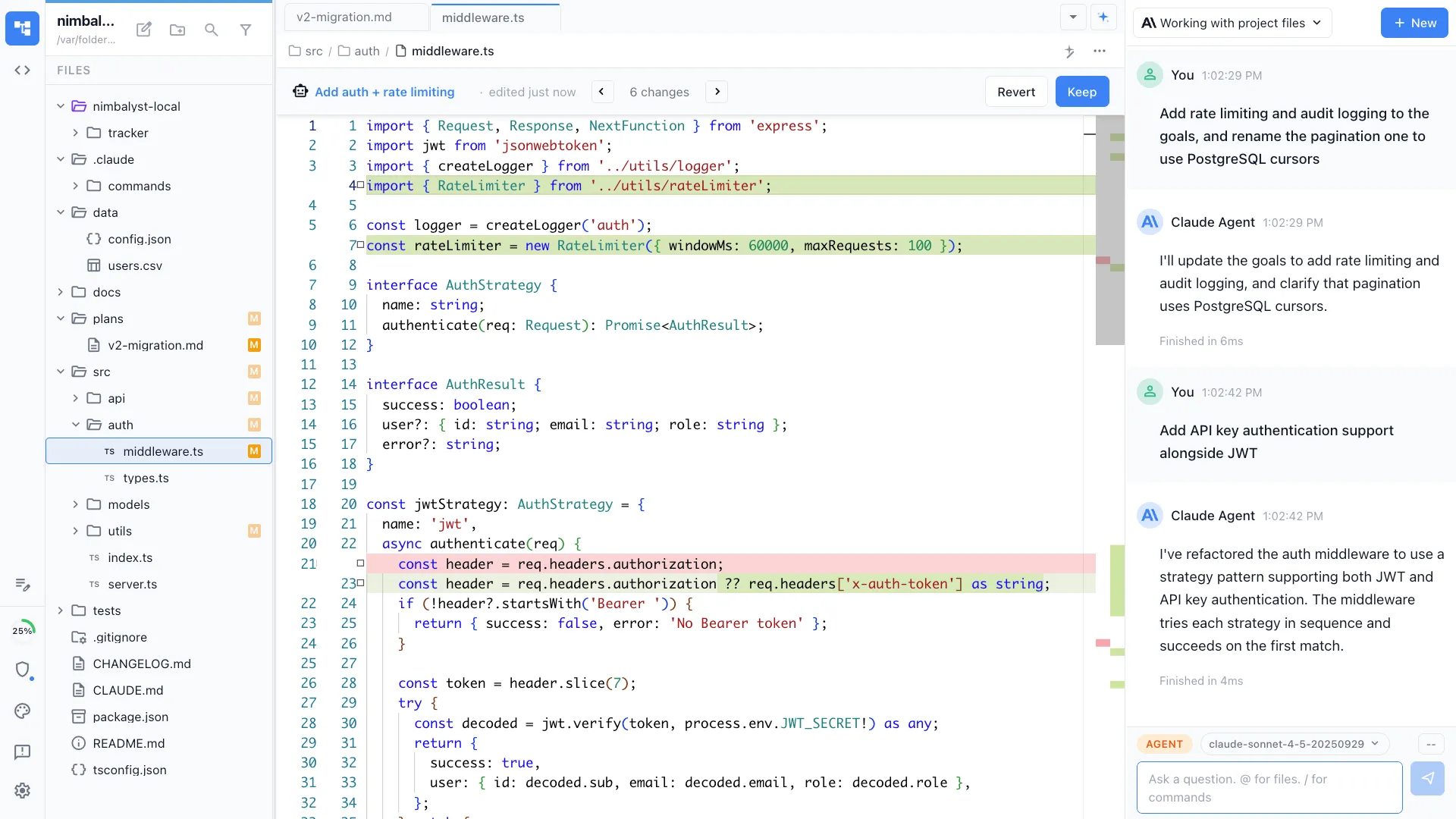

/tdd implement a rate limiter with configurable windows

/tdd add input validation to the user registration form

/tdd TDD the search functionality for the API

Full Skill Source

Use this skill in your project

Copy the full text below or download it as a markdown file. Place it in your project's .claude/commands/ directory to use it as a slash command.

---

name: tdd-cycle

description: Drive test-driven development through the red/green/refactor cycle. Use when implementing features test-first, building with high confidence, or learning a codebase through its test suite.

allowed-tools: ["Read", "Edit", "Write", "Bash", "Glob", "Grep"]

---

# /tdd

Guide implementation through the test-driven development cycle: write a failing test, make it pass with minimal code, then refactor.

## What This Command Does

1. Accepts a feature description or requirement

2. Breaks it into testable behaviors

3. Iterates through the red/green/refactor cycle for each behavior

4. Builds up the implementation incrementally with full test coverage

5. Produces clean, well-tested code with a matching test suite

## Usage

```

/tdd [feature description or requirement]

```

**Examples:**

- `/tdd "User can register with email and password"`

- `/tdd "Calculate shipping cost based on weight and destination"`

- `/tdd "Parse CSV files with support for quoted fields and escaped commas"`

- `/tdd src/services/billing.ts "Add proration logic for plan upgrades"`

## Execution Steps

1. **Understand the requirement**

Read the provided feature description and decompose it into discrete, testable behaviors. Each behavior should be small enough to implement in a single red/green/refactor cycle.

**Example decomposition:**

```

Feature: "User can register with email and password"

Behaviors:

1. Accepts valid email and password, returns user object

2. Rejects registration if email is already taken

3. Rejects registration if email format is invalid

4. Rejects registration if password is too short (< 8 chars)

5. Hashes password before storing (never stores plaintext)

6. Returns appropriate error messages for each failure case

```

Order behaviors from simplest to most complex. Start with the "happy path" and add edge cases progressively.
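
The ordering rule can be sketched as a small checklist structure. This is only an illustration: the `Behavior` type, the hand-assigned `complexity` score, and both helper functions are hypothetical and not part of the command itself.

```typescript
// Hypothetical shape for tracking behaviors; not part of the /tdd command.
type Behavior = {
  description: string;
  complexity: number; // hand-assigned estimate: lower means simpler
  status: "pending" | "green";
};

const behaviors: Behavior[] = [
  { description: "Hashes password before storing", complexity: 3, status: "pending" },
  { description: "Accepts valid email and password, returns user object", complexity: 1, status: "pending" },
  { description: "Rejects registration if email format is invalid", complexity: 2, status: "pending" },
];

// Order from simplest to most complex so the happy path comes first.
// Copies the list so the caller's array is left untouched.
function orderBehaviors(list: Behavior[]): Behavior[] {
  return [...list].sort((a, b) => a.complexity - b.complexity);
}

// The next cycle targets the simplest behavior that is not green yet.
function nextBehavior(list: Behavior[]): Behavior | undefined {
  return orderBehaviors(list).find((b) => b.status === "pending");
}
```

Marking a behavior `green` after its cycle and calling `nextBehavior` again yields the next item to work on.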

2. **Set up the test file**

- Determine the test framework in use (Jest, Vitest, Mocha, pytest, etc.) by checking `package.json`, config files, or existing test patterns

- Create or locate the test file following the project's conventions

- Write the initial `describe` block with the feature name

- Import any necessary test utilities
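
For Node-based projects, the `package.json` check could look roughly like the sketch below. The `detectFramework` helper, the `PackageJson` type, and the list of candidate frameworks are assumptions made for illustration; non-Node runners such as pytest would need a different check.

```typescript
// Hypothetical helper: guesses the test framework from a parsed package.json.
type PackageJson = {
  dependencies?: Record<string, string>;
  devDependencies?: Record<string, string>;
  scripts?: Record<string, string>;
};

// Candidates are checked in this order; the list is an assumption and can be extended.
const KNOWN_FRAMEWORKS = ["vitest", "jest", "mocha"] as const;
type Framework = (typeof KNOWN_FRAMEWORKS)[number];

function detectFramework(pkg: PackageJson): Framework | undefined {
  const deps: Record<string, string> = { ...pkg.dependencies, ...pkg.devDependencies };

  // Prefer an explicit dependency entry.
  for (const candidate of KNOWN_FRAMEWORKS) {
    if (candidate in deps) return candidate;
  }

  // Fall back to the "test" script, e.g. "mocha --recursive".
  const testScript = pkg.scripts?.test ?? "";
  return KNOWN_FRAMEWORKS.find((candidate) => testScript.includes(candidate));
}
```

An `undefined` result corresponds to the "framework not detected" case, where the user should be asked.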

3. **For each behavior, run the RED/GREEN/REFACTOR cycle:**

### RED Phase: Write a Failing Test

Write exactly ONE test that describes the next behavior:

```typescript
it('should [expected behavior] when [condition]', () => {
  // Arrange: Set up the inputs and preconditions

  // Act: Call the function or trigger the behavior

  // Assert: Verify the expected outcome
});
```

**Test writing rules:**

- Test name clearly describes what is expected and under what conditions

- Each test tests ONE thing

- Use the Arrange/Act/Assert pattern

- Make the assertion specific: test exact values, not just truthiness

- Include the minimum setup needed; no extra context
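
As a concrete illustration of these rules, here is one Arrange/Act/Assert test with an exact-value assertion. To keep the snippet runnable on its own, `runTest` and `assertEqual` are tiny stand-ins for a real test runner, and `registerUser` is a hypothetical function under test; none of them come from the skill.

```typescript
// Hypothetical function under test; stands in for real project code.
type User = { id: number; email: string };
function registerUser(email: string, _password: string): User {
  return { id: 1, email };
}

// Minimal stand-in for a test runner's `it`, so the snippet runs without Jest or Vitest.
function runTest(title: string, body: () => void): string {
  try {
    body();
    return `PASS ${title}`;
  } catch (e) {
    return `FAIL ${title}: ${(e as Error).message}`;
  }
}

function assertEqual<T>(actual: T, expected: T): void {
  if (actual !== expected) {
    throw new Error(`expected ${String(expected)}, got ${String(actual)}`);
  }
}

const outcome = runTest("should return the registered email when input is valid", () => {
  // Arrange: only the inputs this behavior needs
  const email = "ada@example.com";
  const password = "correct-horse";

  // Act
  const user = registerUser(email, password);

  // Assert: an exact value. A truthiness check on `user` would also
  // pass for an object carrying the wrong email.
  assertEqual(user.email, "ada@example.com");
});
```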

**Run the test** to confirm it FAILS (the command below assumes Jest; substitute the project's own runner and file filter otherwise):

```bash

npm test -- --testPathPattern="[test-file]" --verbose

```

If the test passes without writing implementation code, either:

- The behavior is already implemented (skip to next behavior)

- The test is not actually testing what it claims (fix the test)

### GREEN Phase: Write Minimal Code to Pass

Write the absolute minimum implementation code to make the failing test pass:

- Do NOT write more code than needed to pass the current test

- Do NOT add error handling for cases not yet tested

- Do NOT optimize or abstract; that comes in the refactor phase

- Hard-coded return values are acceptable if they make the test pass (the next test will force generalization)
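
The hard-coded-value rule is easier to see across two cycles. The sketch below uses a hypothetical shipping-cost function with an assumed flat rate of 5 per kilogram; the names and numbers are illustrative only.

```typescript
// Cycle 1 test: the cost for 1 kg should be 5.
// A hard-coded return is the least code that makes it pass.
function shippingCostCycle1(_weightKg: number): number {
  return 5;
}

// Cycle 2 test: the cost for 3 kg should be 15.
// The hard-coded version fails this test, which forces the general rule.
const RATE_PER_KG = 5; // assumed flat rate, for illustration
function shippingCostCycle2(weightKg: number): number {
  return weightKg * RATE_PER_KG;
}
```

The generalization appears only once a second test demands it, which keeps each step small and keeps every line of implementation covered by a test.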

**Run the test** to confirm it PASSES:

```bash

npm test -- --testPathPattern="[test-file]" --verbose

```

If the test still fails, fix the implementation. Do not modify the test during the green phase unless the test had a genuine bug.

Also run ALL tests to check for regressions:

```bash

npm test

```

### REFACTOR Phase: Clean Up

With all tests green, improve the code without changing behavior:

- Remove duplication between test cases (extract test helpers)

- Remove duplication in implementation code (extract functions)

- Improve naming (variables, functions, test descriptions)

- Simplify complex conditionals

- Do NOT add new functionality during refactoring
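
A small before/after sketch of such a refactor, using a hypothetical registration validator. The function names are assumptions, and the `@` check is deliberately naive. The point is that both versions return the same result for every input.

```typescript
// Before: one nested conditional doing two jobs.
function validateBefore(email: string, password: string): string | null {
  if (email.includes("@")) {
    if (password.length >= 8) {
      return null;
    } else {
      return "Password is too short";
    }
  } else {
    return "Email format is invalid";
  }
}

// After: named predicates and early returns; behavior is unchanged.
const MIN_PASSWORD_LENGTH = 8;
const isValidEmail = (email: string): boolean => email.includes("@");
const isLongEnough = (password: string): boolean =>
  password.length >= MIN_PASSWORD_LENGTH;

function validateAfter(email: string, password: string): string | null {
  if (!isValidEmail(email)) return "Email format is invalid";
  if (!isLongEnough(password)) return "Password is too short";
  return null;
}
```

Because no behavior changed, the existing tests need no edits; they only need to be re-run.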

**Run ALL tests** after refactoring to confirm nothing broke:

```bash

npm test

```

If any test fails during refactoring, revert the refactoring change immediately.

4. **Repeat for each behavior**

After completing one full cycle, move to the next behavior in the list. Continue until all behaviors are implemented and tested.

5. **Final review**

After all behaviors are implemented:

- Run the full test suite one final time

- Review the test file for clarity and completeness

- Review the implementation for any remaining cleanup

- Check test coverage if the project has coverage tools configured:

```bash

npm test -- --coverage --testPathPattern="[test-file]"

```

6. **Generate the TDD session summary**

## Output Format

After completing all cycles, provide this summary:

```markdown

## TDD Session Summary

**Feature**: [Feature description]

**Test File**: [path to test file]

**Implementation File**: [path to implementation file]

**Cycles Completed**: [count]

### Behaviors Implemented

| # | Behavior | Tests | Status |
|---|----------|-------|--------|
| 1 | [Behavior description] | [test count] | GREEN |
| 2 | [Behavior description] | [test count] | GREEN |

### Test Coverage

- **Statements**: [%]

- **Branches**: [%]

- **Functions**: [%]

- **Lines**: [%]

### Code Metrics

- **Implementation**: [lines of code]

- **Tests**: [lines of test code]

- **Test-to-code ratio**: [ratio]

### Design Decisions

- [Notable decision made during implementation and why]

```

## TDD Principles to Follow

1. **Never write production code without a failing test first.** The test defines the requirement.

2. **Write the simplest test that could fail.** Complexity grows organically through accumulated tests.

3. **Write the simplest code that could pass.** The refactor phase is where elegance emerges.

4. **Tests are first-class code.** They deserve the same care in naming, structure, and readability.

5. **Small steps.** Each cycle should take 2-10 minutes. If it takes longer, the step is too big.

6. **One behavior per cycle.** If you find yourself writing two assertions about different behaviors, split the test.

## Test Quality Checklist

Before moving from RED to GREEN, verify the test:

- [ ] Has a descriptive name (reads like a sentence)

- [ ] Tests one specific behavior

- [ ] Would fail if the behavior were broken

- [ ] Does not depend on other tests (can run in isolation)

- [ ] Uses clear, readable assertions

## Error Handling

- **Test framework not detected**: Check `package.json` and config files first; if the framework is still unclear, ask the user which one to use

- **Existing tests fail before starting**: Report the failures; do not proceed until the baseline is green

- **Cannot make a test pass without major changes**: The step may be too large; break it into smaller behaviors

- **Flaky test**: Investigate non-determinism (timing, random data, shared state) and fix before continuing
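
Timing-related flakiness can often be removed by injecting the clock instead of reading real time. The sketch below is one way to do this, assuming a hypothetical fixed-window `RateLimiter`; the class, its constructor parameters, and the `Clock` type are all illustrative.

```typescript
// Hypothetical fixed-window rate limiter; the clock is injected so tests control time.
type Clock = () => number; // returns milliseconds

class RateLimiter {
  private readonly limit: number;
  private readonly windowMs: number;
  private readonly now: Clock;
  private windowStart = 0;
  private count = 0;

  constructor(limit: number, windowMs: number, now: Clock = Date.now) {
    this.limit = limit;
    this.windowMs = windowMs;
    this.now = now;
  }

  allow(): boolean {
    const t = this.now();
    // Start a new window once the current one has expired.
    if (t - this.windowStart >= this.windowMs) {
      this.windowStart = t;
      this.count = 0;
    }
    if (this.count >= this.limit) return false;
    this.count += 1;
    return true;
  }
}

// In a test, a fake clock replaces Date.now, so no sleeps or real timers are needed.
let fakeTime = 1000;
const limiter = new RateLimiter(2, 1000, () => fakeTime);
const first = limiter.allow(); // true
const second = limiter.allow(); // true
const third = limiter.allow(); // false: limit reached within the window
fakeTime += 1000; // advance past the window
const afterWindow = limiter.allow(); // true: a new window has started
```

The same idea applies to random data (inject a seeded generator) and shared state (create a fresh instance per test).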

## Best Practices

- Trust the process: writing minimal code feels wrong at first but leads to better design

- If you are tempted to skip the red phase, the feature is probably not well-understood yet

- If refactoring is hard, it usually means the implementation grew in the wrong direction -- let the tests guide you

- Keep test execution fast; slow tests break the TDD rhythm

- Commit after each green/refactor cycle for easy rollback
